Reflection AI isn’t just raising capital. It is attempting to redefine who controls AI — and where that control lives.

Reflection AI, an open-weight foundation model startup founded by former Google DeepMind researchers, is reportedly raising $2.5 billion at a $25 billion pre-money valuation — signaling a structural shift from centralized AI APIs toward sovereign, locally controlled AI infrastructure. Backed by players like Nvidia and potentially JPMorgan Chase, the company is positioning itself not as another model provider, but as a foundational layer in a new global AI architecture where control, rather than capability alone, becomes the defining variable.

This is not another valuation spike. It is a reallocation of power inside the AI stack.

The Structural Divide: Open Sovereign vs. Closed API

For the past several years, AI development has been dominated by a single paradigm built around centralized, closed, API-driven systems, where access to intelligence is mediated through proprietary platforms controlled by a small number of companies.

Companies like OpenAI and Anthropic have built powerful models, but they have also centralized access, data flow, and control within tightly managed infrastructure layers that enterprises can use but not fully own.

That model is now being challenged.

Reflection AI represents the opposite architecture:

Open-weight, locally deployable, sovereign systems

This is not simply a technical distinction. It is a control-layer divergence that reshapes how intelligence is accessed, deployed, and governed across organizations and nations, a fragmentation trend already visible in “The Global AI Map Is Fragmenting — Who Controls the Next Layer of Intelligence.”

- closed models → access through APIs

- open sovereign models → ownership through deployment

In one system, intelligence is rented. In the other, it is owned.

Why This Shift Is Happening Now

The transition toward sovereign AI is not being driven by developers or startups alone, but increasingly by institutions, governments, and regulated industries that require deeper control over how data and intelligence systems operate within their environments.

Closed AI systems introduce a fundamental constraint:

Data must leave your environment

For enterprises, this creates compliance and security challenges. For governments, it introduces sovereignty risks that extend into national security and strategic autonomy.

Highly regulated sectors such as banking, defense, and healthcare cannot route sensitive data through centralized external infrastructure without introducing unacceptable exposure.

At the same time, geopolitical fragmentation is accelerating.

Countries no longer want dependency on:

- U.S. API providers

- Chinese open-source ecosystems

They want control.

The Strategic Positioning: AI as Sovereign Infrastructure

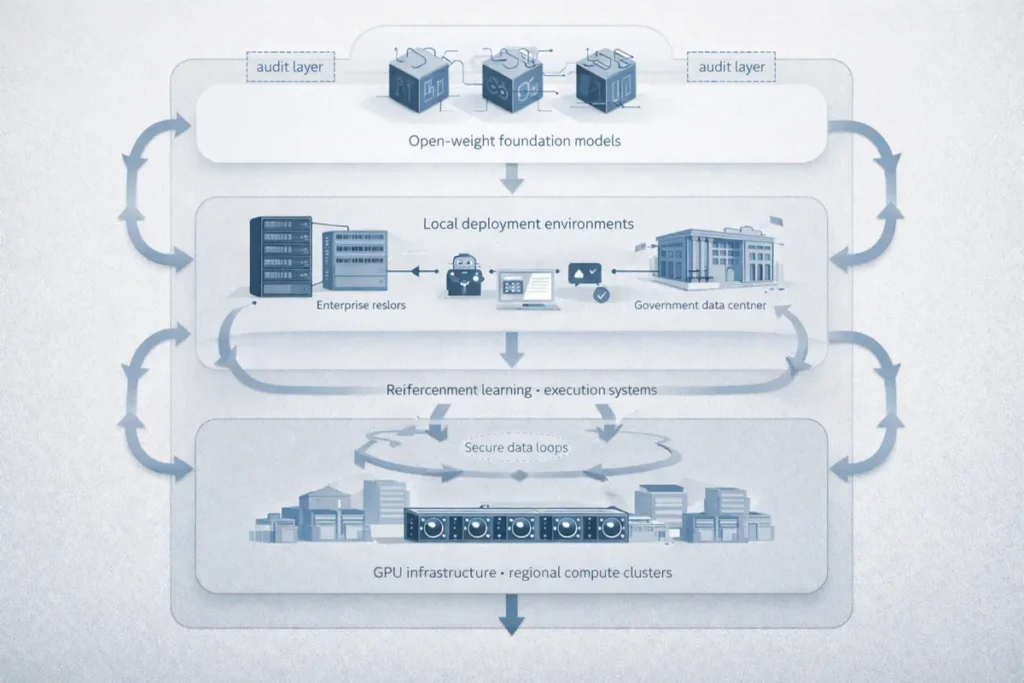

Reflection AI is built specifically to meet this demand by designing models that can be downloaded, audited, modified, and deployed directly within local environments, enabling organizations to operate AI systems without relying on external infrastructure providers.

This enables:

Air-gapped AI systems

Where:

- data never leaves the organization

- models run on sovereign compute

- behavior can be audited and controlled
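One small, concrete piece of "auditable" local deployment is confirming that the weight files copied into an isolated environment are byte-for-byte what the publisher released. The sketch below is a minimal illustration using only Python's standard library. The function names and the idea of a publisher-supplied SHA-256 digest are assumptions for illustration, not a description of Reflection AI's tooling.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large weight files never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read fixed-size chunks until read() returns an empty bytes object.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_weights(path: Path, expected_sha256: str) -> bool:
    """Return True only if the local file matches the expected digest.

    `expected_sha256` is assumed to come from a trusted, out-of-band source
    (hypothetical here), such as a signed release manifest.
    """
    return sha256_of(path) == expected_sha256.strip().lower()
```

A deployment script would call `verify_weights` before loading a model and refuse to proceed on a mismatch. Everything runs offline, which is the point in an air-gapped setting.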

This is not a feature enhancement layered on top of existing systems. It is a foundational requirement for the next phase of AI adoption, particularly in environments where control and compliance are non-negotiable — a pattern increasingly aligned with sovereign infrastructure strategies such as Mistral’s Sovereign AI Infrastructure Play in Europe.

Why Nvidia Is Backing This Model

Nvidia’s involvement reflects a deeper strategic alignment with the direction of the AI infrastructure market, where the location of compute demand becomes as important as the models themselves.

If AI remains API-driven:

Compute demand centralizes within hyperscaler cloud platforms such as Azure and AWS.

If AI becomes sovereign:

Compute demand decentralizes across governments, enterprises, and regional data centers.

This leads to a fundamentally different infrastructure outcome:

- governments build sovereign data centers

- enterprises invest directly in GPU infrastructure

- regional compute ecosystems expand

Backing Reflection AI is effectively a bet on global GPU demand expansion beyond hyperscalers, a thesis consistent with the broader compute-layer shift outlined in “Nvidia-Backed Nscale Raises $2B to Build AI Compute Backbone.”

This is Nvidia’s pick-and-shovel strategy operating at geopolitical scale.

The Real Product: From Chat to Autonomous Execution

Reflection AI is not optimizing for conversational interfaces or incremental improvements in chatbot performance. Instead, it is applying reinforcement learning and search-based techniques to build systems that can operate autonomously over longer time horizons.

Its founding team, including AlphaGo and AlphaZero architect Ioannis Antonoglou, is focused on building systems capable of structured problem-solving rather than reactive response generation.

Long-horizon autonomous execution

Instead of simply answering queries, these systems are designed to:

- plan

- write

- test

- debug

- iterate

This applies particularly within software development environments, where tasks require sustained reasoning across multiple steps.
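The plan, write, test, debug, iterate cycle listed above can be sketched as a simple control loop. This is a toy illustration under stated assumptions, not Reflection AI's method: `propose` is a hypothetical stand-in for a model call that returns canned drafts, and `run_tests` is a hypothetical harness checking a single `add` function.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Attempt:
    step: int
    candidate: str
    passed: bool
    feedback: str


def run_tests(source: str) -> Tuple[bool, str]:
    """Test step: execute the candidate and check it against a tiny suite."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # toy harness only; never exec untrusted code
        add = namespace["add"]
        assert add(2, 3) == 5, "add(2, 3) should be 5"
        assert add(-1, 1) == 0, "add(-1, 1) should be 0"
    except Exception as exc:
        return False, f"{type(exc).__name__}: {exc}"
    return True, "all tests passed"


def propose(step: int, feedback: str) -> str:
    """Write/debug step: hypothetical stand-in for a model call.

    A real system would condition on `feedback`; here the drafts are canned,
    with the first one deliberately buggy.
    """
    drafts = [
        "def add(a, b):\n    return a - b\n",
        "def add(a, b):\n    return a + b\n",
    ]
    return drafts[min(step, len(drafts) - 1)]


def agent_loop(max_steps: int = 5) -> List[Attempt]:
    """Iterate: draft, test, feed the failure back, and stop on success."""
    history: List[Attempt] = []
    feedback = ""
    for step in range(max_steps):
        candidate = propose(step, feedback)
        passed, feedback = run_tests(candidate)
        history.append(Attempt(step, candidate, passed, feedback))
        if passed:
            break
    return history


if __name__ == "__main__":
    for attempt in agent_loop():
        print(attempt.step, attempt.passed, attempt.feedback)
```

The loop carries state (the test feedback and attempt history) across steps and terminates on a verifiable success condition rather than after a single response. That structure is what separates execution-oriented systems from one-shot answers.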

This represents a different class of AI system.

Not conversational. Agentic.

The Asian Compute Layer: Infrastructure as Strategy

The reported $6.7 billion data center partnership in South Korea represents more than capacity expansion; it reflects a deliberate effort to anchor AI infrastructure in strategically aligned regions that sit outside China’s regulatory sphere and do not rely entirely on U.S. hyperscalers.

This creates a trusted compute hub outside China, while also introducing an alternative to fully centralized U.S. infrastructure models.

For allied nations, this provides:

- localized infrastructure

- regulatory alignment

- strategic independence

AI is no longer just software.

It is physical infrastructure tied directly to geopolitical alignment and control over compute resources.

The JPMorgan Signal: Enterprise Validation at the Highest Level

If Nvidia represents supply-side validation of the sovereign AI model, JPMorgan’s potential participation would represent demand-side validation from one of the most tightly regulated and risk-sensitive sectors in the global economy.

Banks operate under constraints that require absolute control over data flows, auditability of systems, and resilience against external dependencies.

Their interest signals one clear conclusion:

Closed AI systems are insufficient for core financial operations

They require systems that can be deployed locally, audited internally, and controlled without reliance on external APIs.

This is where sovereign AI becomes mandatory.

Not optional.

The Hidden Layer Driving the $25B Valuation

The valuation is not based on current revenue or near-term monetization potential, but on the expectation that Reflection AI could control a critical infrastructure layer as the AI stack expands and reorganizes around sovereignty and execution.

Investors are pricing in three converging forces:

1. Sovereign AI Demand

Governments and enterprises increasingly require localized, controlled AI systems.

2. Decentralized Compute Expansion

AI infrastructure is expanding beyond hyperscaler clouds into distributed environments.

3. Agentic System Evolution

AI systems are shifting from interaction-based models toward execution-based systems capable of completing tasks autonomously, a transition aligned with broader execution-layer shifts explored in “Why the Next AI Breakthrough Is Expertise, Not Just Models.”

Reflection sits at the intersection of all three.
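For scale, the reported headline figures imply the following round arithmetic. This is a rough sketch that assumes the full $2.5 billion is new primary capital and ignores option pools, secondary sales, and any other terms, none of which are stated in the reporting. Here $V_{\text{pre}}$ is the pre-money valuation and $I$ is the amount raised.

$$
V_{\text{post}} = V_{\text{pre}} + I = \$25\text{B} + \$2.5\text{B} = \$27.5\text{B},
\qquad
\frac{I}{V_{\text{post}}} = \frac{2.5}{27.5} \approx 9.1\%
$$

Under those assumptions, new investors would hold roughly 9% of the company after the round.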

The Competitive Reframe

This is not a direct competition between individual companies.

It is a competition between two different models of how AI will be structured globally.

Two AI world models

Closed API world:

- centralized

- scalable

- controlled by a small number of providers

Sovereign world:

- decentralized

- fragmented

- controlled by nations and enterprises

Both will exist.

But they serve fundamentally different power structures.

The Constraint Layer

The opportunity is significant, but the constraints are equally structural and difficult to overcome at scale.

Open models risk commoditization, sovereign deployments introduce operational complexity, training frontier systems remains capital intensive, and ecosystem fragmentation may slow adoption across markets.

Most critically:

Performance must match or exceed closed systems.

Without that, sovereignty alone is not sufficient to drive adoption.

Why This Matters Now

AI is entering a new phase in which the defining question is no longer who can build the most capable models, but who controls how those models are deployed, governed, and integrated into real-world systems.

The first phase of AI was defined by capability.

The next phase is defined by:

Control

And that represents a fundamentally different competitive dynamic.

The Structural Shift: From Intelligence to Infrastructure

The AI stack is expanding beyond model development into a layered system where intelligence, orchestration, and infrastructure each represent distinct sources of value and control.

- models → intelligence

- systems → orchestration

- infrastructure → control

Reflection is positioning itself at the infrastructure layer, reinforcing the broader stack reordering described in “The AI Infrastructure Split — Who Controls the Next Layer of AI.”

That is where long-term power accumulates.

What Reflection AI Is Actually Building

Reflection is not simply building an AI company or a model provider; it is attempting to construct a sovereign operating layer for artificial intelligence.

In this system:

- models are owned, not rented

- compute is local, not centralized

- intelligence is controlled, not outsourced

This is not a product.

It is a strategic layer of global infrastructure.

Editorial Close

The AI race is no longer defined solely by who can build more capable systems, but by who determines where those systems run, who controls them, and how they are integrated into the structures that govern economies and institutions.

Because those decisions ultimately determine:

Who controls intelligence. Where it operates. And who benefits from it.

Reflection AI is entering that battle directly.

Not by competing on outputs.

But by redefining where AI power resides.

Research Context

Based on funding disclosures, investor signals, DeepMind founder backgrounds, Nvidia strategy alignment, and emerging geopolitical dynamics in sovereign AI infrastructure.

Editorial Note

This analysis reflects independent interpretation of publicly available information and structural shifts in the global AI ecosystem.