Blackstone’s backing signals more than capital scale. It tests whether infrastructure founders can out-execute demand.

India does not lack AI startups.

It lacks sovereign-scale compute.

While application-layer founders optimize growth loops and engagement metrics, infrastructure founders optimize utilization curves, megawatt agreements, and capital deployment against physical constraints. The difference is not stylistic. It is structural.

This week, Neysa stepped directly into that structural gap. It is positioning itself not as another AI startup, but as India’s domestic compute backbone.

Backed by Blackstone and a syndicate including Teachers’ Venture Growth, TVS Capital, 360 ONE Assets, and Nexus Ventures, Neysa has secured commitments that enable a capital raise of up to $1.2 billion. Roughly $600 million comes from equity commitments, with additional debt financing planned.

The enterprise valuation sits near $1.4 billion.

Capital volume is not the core signal.

The deeper signal is that India’s AI push is entering its infrastructure phase, a shift that mirrors the broader capital repricing we analyzed in The Week AI Capital Repriced Itself at $380B and 27x Revenue.

Infrastructure Is a Different Founder Game

Application startups scale through iteration. Infrastructure startups scale through precision.

Neysa’s ambition is not incremental SaaS expansion. It is the deployment of more than 20,000 GPUs across enterprise and government clients, positioning itself as a domestic AI acceleration layer at a moment when global compute demand is tightening.

That changes founder psychology. Infrastructure founders are not rewarded for narrative velocity. They are judged by capital discipline. In this layer, charisma cannot outrun cash flow.

Infrastructure leaders do not pivot features. They restructure capital exposure. Idle GPUs are not abstract metrics. They are balance sheet liabilities. Underutilization compounds cost before it compounds revenue.

Execution risk here is mechanical, not theoretical.

The Numeric Density Behind the Bet

Consider the capital structure in context:

• Up to $1.2B total raise capacity

• ~$600M equity commitment

• ~$1.4B enterprise valuation

• 20,000+ GPU deployment target

• Global hyperscaler capex running to hundreds of billions of dollars annually

• India’s sovereign AI allocations expanding under national digital initiatives

This is not seed-stage experimentation. It is asset-backed positioning.

Most Indian AI funding cycles have historically centered on application logic. Previous large rounds in India’s AI ecosystem largely remained sub-$200M and focused on SaaS or vertical automation layers. This raise signals capital confidence in infrastructure monetization rather than software arbitrage.

That distinction marks a structural shift.

Why Blackstone Changes the Equation

Blackstone is not underwriting a prototype.

Private equity participation introduces expectations around capital discipline, asset utilization, and predictable revenue layering. Infrastructure investors prioritize cash flow visibility over narrative expansion.

For Neysa’s founder, this shifts the incentive structure.

Growth is no longer measured only by customer acquisition. It is measured by utilization rates, enterprise contracts, power purchase agreements, and long-term hosting commitments.

That is a harder operational regime.

The Founder Risk Layer

The founder tension is straightforward.

Deploying GPUs at scale assumes sustained enterprise demand. Demand curves, however, are volatile. Model efficiency improvements reduce compute intensity over time. Open-source diffusion shifts workload distribution. Cloud providers adjust pricing strategies aggressively.

Infrastructure founders must model against multiple scenarios simultaneously:

• Peak demand utilization

• Conservative enterprise adoption

• Pricing compression from hyperscalers

• Regulatory shifts in data localization

Unlike application founders who can pivot feature sets, infrastructure founders pivot capital structures.

Debt servicing schedules do not respond to product iteration speed.

This is where leadership is tested.

The Execution Math Beneath the Narrative

Beneath the funding headline sits execution arithmetic.

High-performance AI GPUs can range widely in cost depending on configuration and supply conditions. At scale, procurement and deployment represent substantial capital commitment before revenue realization.

Utilization sensitivity becomes decisive.

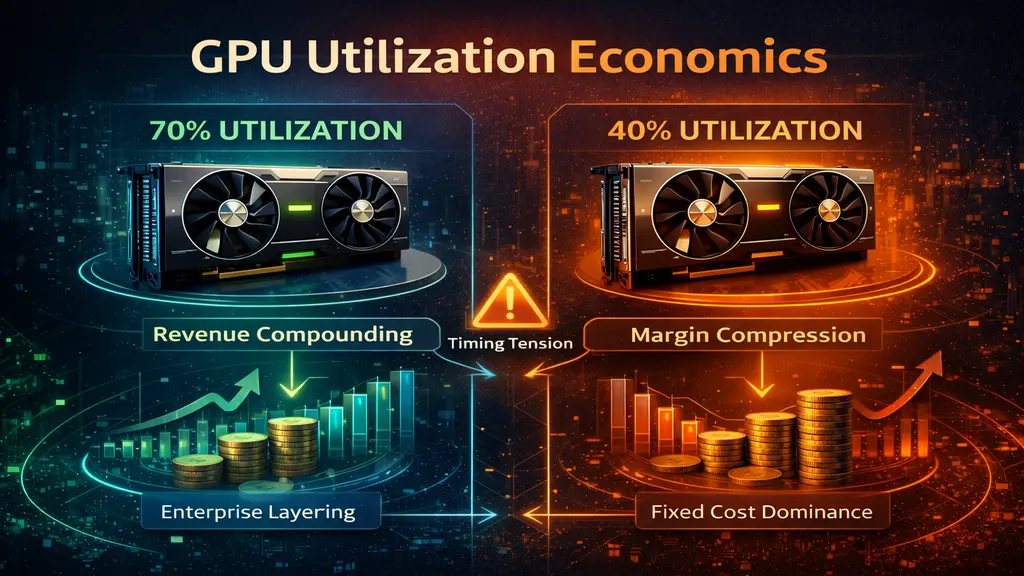

At 70 percent utilization, infrastructure economics can stabilize and compound through enterprise layering. At 40 percent utilization, fixed costs dominate and margin compression accelerates. The delta between those two numbers determines whether infrastructure capital compounds or constrains.
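The gap between 70 and 40 percent can be made concrete with a small sketch. Every input below (price per GPU-hour, fixed cost per GPU-year, variable cost per billed hour) is a hypothetical placeholder chosen for illustration, not a reported Neysa figure; only the 20,000-GPU fleet size and the two utilization levels come from the article.

```python
HOURS_PER_YEAR = 8760


def annual_economics(gpus, utilization, price_per_hour,
                     fixed_cost_per_gpu_year, variable_cost_per_hour):
    """Return (revenue, cost, operating_margin) for one year of a GPU fleet."""
    billed_hours = gpus * HOURS_PER_YEAR * utilization
    revenue = billed_hours * price_per_hour
    # Fixed costs (depreciation, power contracts, facilities) accrue whether or
    # not a GPU is rented; variable costs scale with billed hours.
    cost = gpus * fixed_cost_per_gpu_year + billed_hours * variable_cost_per_hour
    return revenue, cost, (revenue - cost) / revenue


def breakeven_utilization(price_per_hour, fixed_cost_per_gpu_year,
                          variable_cost_per_hour):
    """Utilization at which revenue exactly covers fixed plus variable cost."""
    contribution = price_per_hour - variable_cost_per_hour
    if contribution <= 0:
        raise ValueError("price must exceed variable cost per hour")
    return fixed_cost_per_gpu_year / (HOURS_PER_YEAR * contribution)


# Hypothetical inputs, for illustration only.
PRICE = 2.00      # USD per GPU-hour (assumed)
FIXED = 9_000.0   # USD per GPU per year (assumed)
VARIABLE = 0.20   # USD per billed GPU-hour (assumed)

for u in (0.70, 0.40):
    _, _, margin = annual_economics(20_000, u, PRICE, FIXED, VARIABLE)
    print(f"utilization {u:.0%}: operating margin {margin:.1%}")

print(f"breakeven utilization: {breakeven_utilization(PRICE, FIXED, VARIABLE):.1%}")
```

Under these assumed inputs the breakeven sits between the two utilization levels, which is the point of the comparison: the same fleet is profitable at one level and loss-making at the other. Different price or cost assumptions move the breakeven, not the shape of the sensitivity.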

Debt introduces timing tension.

Capex deployment must align with revenue ramp. If enterprise onboarding lags hardware activation, balance sheet stress emerges before pricing power materializes.
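A rough way to see the timing tension is to track the cash balance month by month when hardware is live from day one but revenue starts late. The function and all figures below (in USD millions) are hypothetical assumptions for illustration, including the linear revenue ramp; none of them are drawn from Neysa's disclosures.

```python
def cash_trough(months, lag_months, ramp_months, full_monthly_revenue,
                monthly_fixed_cost, monthly_debt_service, opening_cash):
    """Return the lowest cash balance reached over the simulated horizon.

    Hardware costs and debt service start in month 0. Revenue is zero for
    `lag_months`, then ramps linearly to full run-rate over `ramp_months`.
    """
    cash = opening_cash
    lowest = cash
    for month in range(months):
        if month < lag_months:
            ramp = 0.0
        else:
            ramp = min(1.0, (month - lag_months + 1) / ramp_months)
        cash += ramp * full_monthly_revenue
        cash -= monthly_fixed_cost + monthly_debt_service
        lowest = min(lowest, cash)
    return lowest


# Hypothetical inputs in USD millions, for illustration only.
assumptions = dict(
    months=36,
    ramp_months=12,
    full_monthly_revenue=20.0,
    monthly_fixed_cost=8.0,
    monthly_debt_service=4.0,
    opening_cash=100.0,
)

for lag in (0, 3, 6):
    trough = cash_trough(lag_months=lag, **assumptions)
    print(f"onboarding lag {lag} months: lowest cash balance {trough:.1f}M")
```

With these assumed numbers, a six-month onboarding lag is enough to push the lowest cash balance below zero even though the steady-state business is cash-positive. The stress comes from sequencing, not from the long-run economics.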

Infrastructure margin compression is cyclical, not theoretical. Hyperscaler pricing flexibility can reset expectations quickly. Model efficiency gains can reduce compute requirements per workload.

Founders in this layer operate less like SaaS builders and more like capital allocators managing industrial assets.

Competitive Context: Domestic vs Global Compute

India’s AI ambition is growing inside a geopolitical environment where compute access increasingly carries strategic weight.

Global hyperscalers continue deploying hundreds of billions in capital expenditure annually, reinforcing the geographic fragmentation of AI infrastructure detailed in The Global AI Map Is Fragmenting: How Each Continent Is Betting on a Different AI Future. U.S. frontier model providers are consolidating capital concentration. China accelerates domestic model iteration. Europe pushes sovereign infrastructure containment.

In that landscape, India faces a structural choice: remain dependent on foreign compute layers or cultivate domestic capacity. The dilemma resembles the sovereign infrastructure strategy explored in Mistral AI’s Sovereign Infrastructure Bet in Europe.

Neysa is attempting the second path.

Whether it becomes a durable infrastructure layer depends less on headline funding and more on execution discipline.

Strategic Implications for Founders

This round redefines what AI ambition looks like in India.

For founders watching from the application layer, the message is subtle but clear:

Infrastructure scale is returning to center stage.

That introduces new archetypes:

• The infrastructure allocator

• The compute orchestrator

• The sovereign capacity builder

These founders do not optimize for virality. They optimize for structural embedment inside economic systems.

Capital at this scale implies long-term intent.

Long-Term Systemic Takeaway

If Neysa executes effectively, India reduces reliance on foreign-hosted compute infrastructure. Domestic enterprises gain lower-latency access. Government AI initiatives anchor inside national jurisdiction.

If execution falters, capital intensity becomes a constraint rather than a moat.

Infrastructure does not forgive misallocation.

But it rewards discipline disproportionately.

India’s AI future will not be determined only by who builds the most capable models. It will be shaped by who controls the compute substrate beneath them.

Neysa’s founder is operating at that substrate layer.

That is a higher leverage game.

And a far less forgiving one.

Research Context: Funding structure, valuation range, and investor participation synthesized from publicly reported financial disclosures and major business media coverage of Neysa’s capital raise, alongside broader AI infrastructure investment trends.

Editorial Note: This article reflects independent analysis of publicly reported information and broader AI ecosystem capital dynamics.