Inside the coordination layer determining what enterprise AI knows before it acts

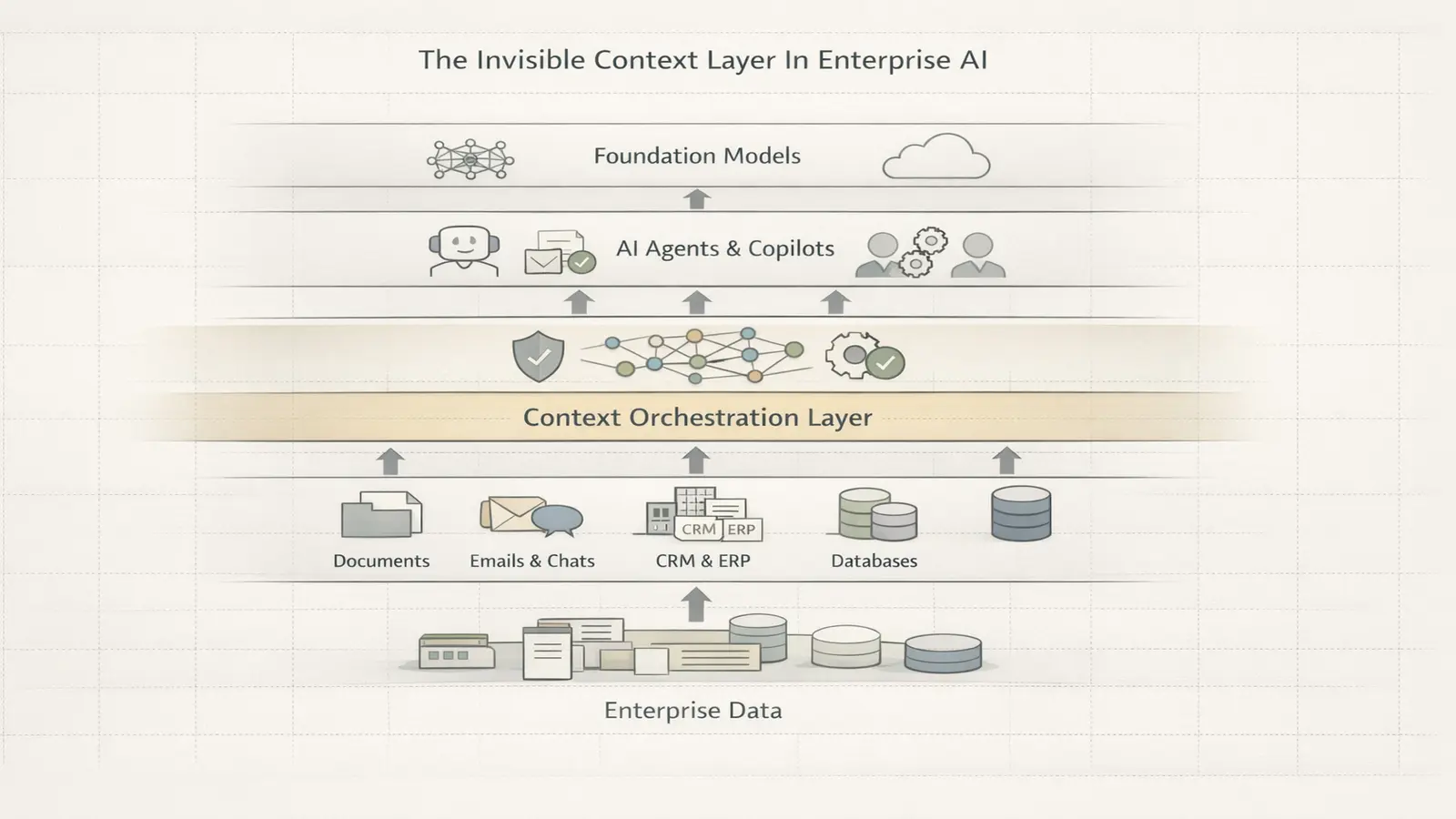

Enterprise AI adoption is revealing a structural shift rather than a simple capability race. While frontier models continue improving, enterprises face a more practical constraint: how intelligence accesses internal knowledge, respects permissions, and executes workflows reliably.

Glean operates inside that shift, positioning itself as an infrastructure layer that determines how intelligence interacts with enterprise data rather than how intelligence is produced.

The company sits within a rapidly emerging category: context orchestration platforms that connect fragmented enterprise systems into a unified runtime layer. As organizations deploy multiple models simultaneously, the coordination of knowledge retrieval is becoming as strategically important as the models themselves.

Glean’s architecture reflects this evolution. Instead of building foundation models, it builds the connective tissue that allows those models to function inside real organizations.

This is not search. It is infrastructure. This shift mirrors how enterprise AI infrastructure competition is moving toward deployment control rather than model capability.

From Enterprise Search to Context Orchestration

Enterprise search historically indexed documents without understanding relationships. Modern AI requires something different: retrieving the right context at the exact moment intelligence acts.

Glean’s core thesis is that enterprise knowledge is distributed across SaaS tools, communication platforms, and internal systems. Its platform constructs a permission-aware knowledge graph mapping how information flows across the organization.

That graph enables:

- Context retrieval for assistants

- Workflow-aware recommendations

- Agent grounding across tools

Retrieval shifts from static indexing to runtime coordination. In infrastructure terms, Glean sits between storage and intelligence.
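
To make "permission-aware" retrieval concrete, here is a minimal Python sketch. It is not Glean's implementation or API: the `Document` type, the `retrieve` function, the group names, and the naive term-overlap ranking are all assumptions made for illustration. The one idea it shows is that access checks run before ranking, so restricted text never reaches a model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str                # hypothetical source system name
    text: str
    allowed_groups: frozenset  # groups permitted to read this document


def retrieve(query: str, user_groups: set, corpus: list, k: int = 3) -> list:
    """Return up to k readable documents, ranked by naive term overlap."""
    terms = set(query.lower().split())
    # Enforce permissions first so restricted text is never scored or returned.
    readable = [d for d in corpus if d.allowed_groups & user_groups]
    scored = [(len(terms & set(d.text.lower().split())), d) for d in readable]
    # Keep only documents that share at least one term with the query.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:k]]


# Illustrative corpus; all identifiers and contents are made up.
corpus = [
    Document("hr-1", "wiki", "parental leave policy for all employees", frozenset({"everyone"})),
    Document("fin-7", "drive", "draft leave budget and salary bands", frozenset({"finance"})),
    Document("eng-3", "tracker", "deploy checklist for the payments service", frozenset({"engineering"})),
]

results = retrieve("leave policy", {"everyone", "engineering"}, corpus)
print([d.doc_id for d in results])
```

In this toy corpus the finance draft also mentions "leave", but a user outside the finance group never sees it because the filter runs before scoring. A production system would presumably replace the term overlap with a real ranking model.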

Why Context Became the Bottleneck

As model performance converges, differentiation shifts toward data access. Enterprises rarely rely on a single AI provider. Instead, they run heterogeneous stacks shaped by cost, compliance, and task specialization.

This creates a new bottleneck: context availability.

Without unified retrieval, agents hallucinate, duplicate work, or operate without organizational awareness. Glean addresses this by standardizing how knowledge is discovered, ranked, and delivered to downstream systems.

The platform effectively becomes a control plane for organizational context, determining what intelligence sees before it acts. This mirrors the broader emergence of AI control planes as governance infrastructure shaping enterprise deployment decisions.

Large enterprise deployments increasingly connect dozens of internal tools through context platforms, with usage shifting from search queries toward embedded workflow triggers. That shift signals that retrieval infrastructure is becoming operational rather than exploratory.

Deployments across fintech firms, SaaS companies, and global consultancies illustrate this shift: onboarding assistants pull HR policies, support agents resolve tickets through contextual retrieval, and engineering copilots ground code suggestions in internal documentation.

Over the next 24–36 months, this shift is expected to redefine how enterprise AI stacks are designed.

The Invisible Infrastructure Layer: From Retrieval to Runtime Coordination

Industry discourse increasingly describes a foundational middleware layer operating beneath enterprise AI interfaces. This invisible infrastructure layer enables models to interact with organizational data, workflows, and governance frameworks at scale.

Rather than delivering user-facing experiences, the layer performs critical background tasks:

- Secure data retrieval with permission enforcement

- Knowledge graph construction for organizational context

- Multi-model orchestration across providers

- Governance to reduce hallucinations and ensure compliance

- Traceability for audits and monitoring

The shift reflects enterprise AI’s move from experimentation toward production infrastructure, where integration depth and reliability outweigh raw capability.

The most valuable enterprise AI companies may never build models.

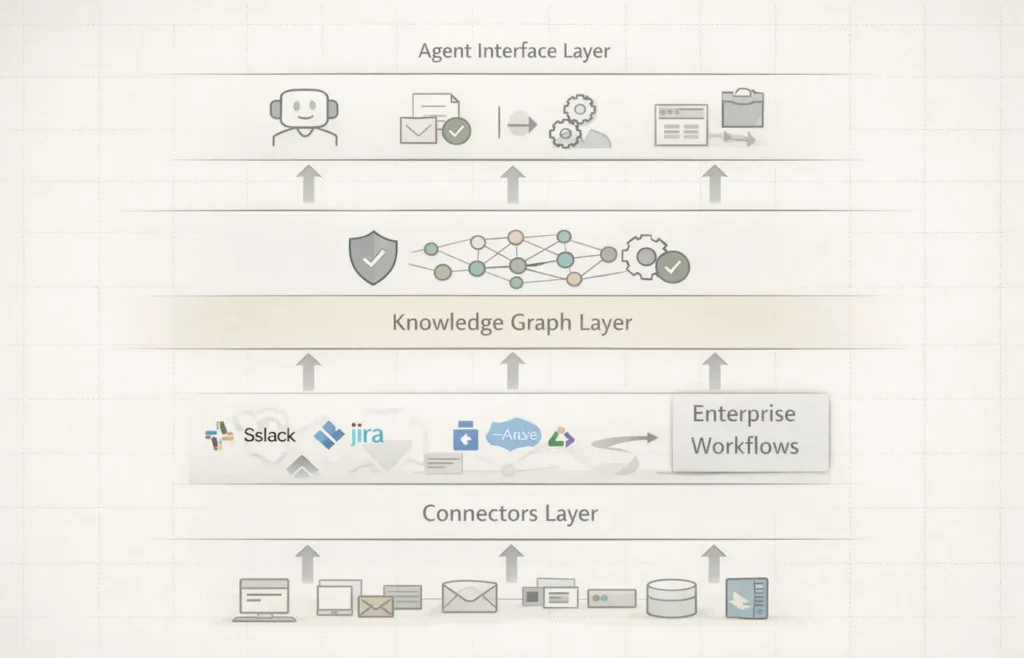

The Agentic Shift Is Making the Layer Critical

As enterprises adopt agentic architectures, coordination complexity increases. AI systems now execute sequences of tasks across tools rather than answering isolated queries.

This introduces new infrastructure requirements:

- Persistent agent memory

- Orchestration logic for task sequencing

- Semantic layers enabling contextual reasoning

- API-first embedding into legacy systems

Modern context platforms increasingly integrate connectors across Slack, Jira, Salesforce, Google Drive, GitHub, and internal databases, allowing agents to operate across workflows rather than isolated knowledge silos.

The invisible layer becomes the runtime environment for organizational intelligence as agents transition from assistants to operators inside enterprise systems.
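
The requirements listed above, task sequencing and agent memory in particular, can be sketched in a few lines of Python. This is a hedged illustration only: `run_plan`, the three tool stubs, and the ticket text are hypothetical, and the "memory" here is an in-process dictionary standing in for whatever persistent store a real platform would use.

```python
from typing import Callable, Dict, List, Tuple

# A step reads the shared memory and returns a result to store in it.
Step = Callable[[Dict[str, str]], str]


def run_plan(steps: List[Tuple[str, Step]], memory: Dict[str, str]) -> Dict[str, str]:
    """Run named steps in order, saving each result under the step's name."""
    for name, step in steps:
        memory[name] = step(memory)
    return memory


# Hypothetical tool stubs; real connectors would call external systems.
def find_ticket(memory: Dict[str, str]) -> str:
    return "TICKET-42: login fails after password reset"


def look_up_runbook(memory: Dict[str, str]) -> str:
    # Depends on the earlier step's output, read back from memory.
    issue = memory["ticket"].split(": ", 1)[1]
    return f"runbook for: {issue}"


def draft_reply(memory: Dict[str, str]) -> str:
    return f"Per {memory['runbook']}, please clear your session and retry."


memory = run_plan(
    [("ticket", find_ticket), ("runbook", look_up_runbook), ("reply", draft_reply)],
    memory={},
)
print(memory["reply"])
```

Each step can only build on what earlier steps wrote to memory, which is the coordination problem in miniature: the ordering logic and the shared state live outside any single tool or model.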

Why Glean Fits the Category

Founded in 2019 by former Google engineers including CEO Arvind Jain, Glean evolved from enterprise search into context orchestration middleware.

Its platform abstracts models from enterprise systems, enabling organizations to deploy multiple AI providers against a unified context layer. The result is neutrality, historically a strong infrastructure advantage.

Capabilities converge across retrieval, orchestration, governance, and workflow execution. Glean positions itself as the intelligence layer beneath the interface.

Architecture: The Runtime Context Layer

Glean’s architecture can be understood as a three-layer stack:

- Connectors layer integrates enterprise tools

- Knowledge graph layer maps relationships and permissions

- Agent interface layer exposes context to assistants

This layered design allows independence from any single model provider. Enterprises adopting multiple copilots increasingly require that neutrality.
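
The three layers can be sketched as follows to show why the model becomes a swappable part. Everything here is an assumption for illustration rather than Glean's actual design: the class names, the record shape, and the two stub "models" are invented, and the knowledge graph is reduced to a flat index with a single group check.

```python
from typing import Callable, Dict, List, Protocol


class Connector(Protocol):
    """Connectors layer: pulls records out of one enterprise tool."""
    def fetch(self) -> List[Dict[str, str]]: ...


class StaticConnector:
    """Stand-in connector that returns fixed records (no network calls)."""
    def __init__(self, records: List[Dict[str, str]]):
        self._records = records

    def fetch(self) -> List[Dict[str, str]]:
        return list(self._records)


class KnowledgeGraph:
    """Graph layer, reduced here to an index of records and who may read them."""
    def __init__(self, connectors: List[Connector]):
        self.records = [r for c in connectors for r in c.fetch()]

    def context_for(self, user_group: str) -> List[str]:
        return [r["text"] for r in self.records if r["group"] == user_group]


class AgentInterface:
    """Interface layer: hands the same context to whichever model is plugged in."""
    def __init__(self, graph: KnowledgeGraph, model: Callable[[str, List[str]], str]):
        self.graph = graph
        self.model = model

    def ask(self, question: str, user_group: str) -> str:
        return self.model(question, self.graph.context_for(user_group))


# Two stub "models" standing in for different providers.
def model_a(question: str, context: List[str]) -> str:
    return f"A saw {len(context)} snippet(s)"


def model_b(question: str, context: List[str]) -> str:
    return f"B saw {len(context)} snippet(s)"


graph = KnowledgeGraph([
    StaticConnector([{"text": "Q3 roadmap", "group": "product"}]),
    StaticConnector([{"text": "On-call rota", "group": "engineering"},
                     {"text": "Launch checklist", "group": "product"}]),
])
answers = [AgentInterface(graph, m).ask("What is planned?", "product")
           for m in (model_a, model_b)]
print(answers)
```

Both stubs receive identical context from the same graph, so swapping one for the other changes nothing upstream of the interface layer.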

The model becomes interchangeable. The context layer does not.

Enterprise AI stacks increasingly resemble layered operating systems where context orchestration functions as middleware between data storage and agent execution, defining how intelligence is routed rather than how it is generated. A similar structural shift appears across global AI infrastructure fragmentation, where control layers determine strategic leverage.

Funding Signals: Investors Are Pricing Context as Infrastructure

Glean’s funding trajectory reflects investor conviction around this category.

- February 2024: ~$2.2B valuation

- September 2024: ~$4.6B valuation

- June 2025: $150M Series F led by Wellington Management at ~$7.2B valuation
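
As a quick check on what those three figures imply, the short Python calculation below derives the step-up multiple between consecutive rounds and across the whole sequence. It uses only the approximate valuations listed above and assumes nothing else about the rounds.

```python
# Approximate valuations from the list above, in billions of US dollars.
valuations_busd = [2.2, 4.6, 7.2]

# Multiple between each round and the one before it.
step_ups = [later / earlier
            for earlier, later in zip(valuations_busd, valuations_busd[1:])]
# Multiple from the first listed valuation to the last.
overall = valuations_busd[-1] / valuations_busd[0]

for multiple in step_ups:
    print(f"round-over-round step-up: {multiple:.2f}x")
print(f"overall step-up: {overall:.2f}x")
```

The valuation roughly doubled between the first two rounds, grew by a little over half again in the third, and more than tripled across the sequence.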

Investors include Altimeter, DST Global, Sequoia, Kleiner Perkins and others. The company crossed $100M ARR in under three years while maintaining capital efficiency relative to compute-heavy model companies.

This aligns with a broader infrastructure portfolio logic: capability production, context coordination, and workflow execution as distinct layers. Recent analysis shows AI capital repricing toward infrastructure duration, reinforcing investor focus on coordination layers.

Glean sits at the coordination layer, where leverage compounds as AI usage increases.

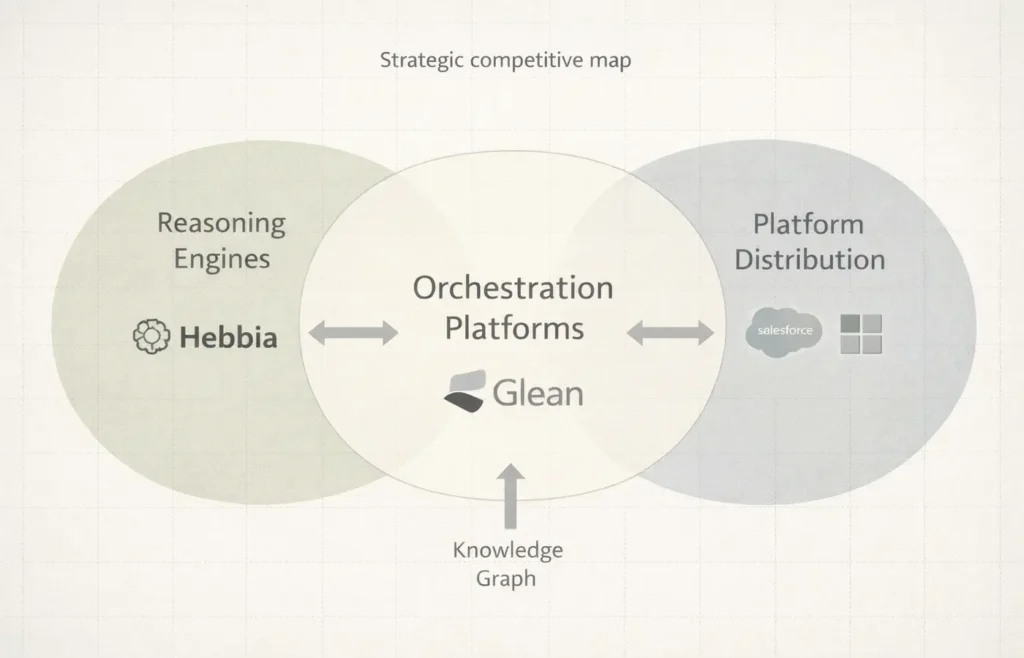

Competitive Landscape: Breadth vs Reasoning vs Distribution

The category is forming around three architectural approaches:

- Horizontal orchestration platforms prioritizing integration breadth

- Deep reasoning engines focused on complex workflows

- Platform incumbents embedding assistants into productivity suites

Glean represents the orchestration approach.

Glean vs Hebbia: Breadth vs Deep Reasoning

While orchestration platforms such as Glean prioritize cross-stack visibility, companies like Hebbia focus on analytical depth inside high-value workflows. Hebbia emphasizes reasoning over documents, while Glean emphasizes contextual continuity across tools and teams.

The distinction reflects parallel infrastructure bets: systems that understand organizational context versus systems that enhance cognitive depth. Enterprise deployments increasingly require both, suggesting layered coexistence rather than winner-take-all outcomes.

Infrastructure layers often overlap in this way during early category formation, before the boundaries between them settle.

The Moat: Permission, Usage, and Workflow Graphs

Context infrastructure relies on graph depth rather than feature depth.

Glean’s defensibility emerges from three reinforcing layers:

- Permission graph defining access boundaries

- Usage graph capturing knowledge flow

- Workflow graph revealing where intelligence is applied

As these graphs converge, replacement costs increase because the platform encodes organizational behavior rather than storing information.

Infrastructure moats form when systems sit inside decision pathways.

Risks: Platform Absorption and Model-Native Retrieval

The category’s risks are structural.

Productivity platforms could expand native indexing capabilities. Models may improve direct reasoning over enterprise data. Connector functionality risks commoditization.

Platform Incumbent Risk: The Copilot Layer Expansion

The most significant pressure comes from platforms embedding native context layers directly into productivity ecosystems. Offerings such as Microsoft Copilot combine distribution, data proximity, and model integration, potentially compressing independent orchestration layers by bundling retrieval into existing workflows.

This creates a structural tension between neutrality and distribution. Independent context platforms offer interoperability across models, while incumbents offer frictionless adoption.

Long-term winners must evolve beyond retrieval into runtime coordination, governance, and agent routing. The invisible layer must become operational infrastructure.

Forward Signal: Context Will Define Enterprise AI Leaders

Enterprise AI is moving toward a layered architecture where capability, context, and execution evolve independently. Control over context determines how intelligence is applied, measured, and trusted.

Glean’s strategy reflects a broader shift from building smarter models to structuring how intelligence interacts with organizations. As agents proliferate, the system that decides what they know becomes strategically central. Founder strategy is increasingly shaped by AI capital stack design, where coordination layers influence long-term defensibility.

The competitive boundary is shifting upstream toward coordination.

The most important enterprise AI platforms may not generate intelligence. They may determine what it sees.

Over the next three to five years, the context layer is likely to become the default control surface for enterprise agents, shifting competitive leverage away from model providers toward orchestration infrastructure.

Editorial Takeaway

Glean represents a new class of AI infrastructure companies focused on context rather than capability. Its platform illustrates how enterprise AI is reorganizing around orchestration layers that sit between knowledge and action.

If the current trajectory holds, the invisible infrastructure that routes context could accumulate more durable leverage than the models it serves.

Enterprise AI is no longer just a model race. It is becoming a competition to structure organizational awareness.

And that competition is only beginning.

Research Context: Synthesizes company disclosures, venture funding data, enterprise deployment trends, infrastructure research on context orchestration, and category positioning across AI middleware, agent infrastructure, and enterprise knowledge platforms.

Editorial Note: This article reflects independent TechFront360 analysis of the emerging context infrastructure layer shaping enterprise AI architecture, investment allocation, and competitive dynamics.