Aetherflux isn’t just building in space. It is attempting to move AI infrastructure off Earth entirely.

Aetherflux, the space infrastructure startup founded by Robinhood co-founder Baiju Bhatt, is reportedly in talks to raise $250–350 million at a ~$2 billion valuation — signaling a radical shift in how AI compute could be powered, deployed, and scaled in the coming decade. What began as an experiment in space-based solar power is rapidly evolving into something far more ambitious: orbital data centers designed to bypass Earth’s most fundamental constraint — energy.

This is not an incremental infrastructure upgrade. It is a redefinition of where computation happens.

The Core Thesis: AI Doesn’t Belong on Earth Anymore

The current AI boom is not constrained by models. It is constrained by infrastructure:

- power availability

- cooling capacity

- land constraints

- permitting delays

- grid instability

Data centers now take 5–8 years to build at scale, while AI demand is compounding far faster — a constraint already visible across global infrastructure systems as explored in Nscale Raises $2B — The Compute Backbone Powering AI’s Next Phase.

Aetherflux’s thesis is simple:

Move compute to where energy is abundant.

That place is orbit.

In low-Earth orbit (LEO):

- solar energy is near-continuous in a suitably chosen orbit (for example, dawn-dusk sun-synchronous)

- cooling needs no water or chillers, because heat is radiated to space (though this requires large radiator panels)

- land constraints disappear

- power generation is not grid-limited
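How continuous orbital sunlight is depends on the orbit. The sketch below is a rough geometric estimate, not anything published by Aetherflux: it assumes a circular orbit, a cylindrical Earth shadow, and the worst case where the Sun lies in the orbital plane. The function name and the sample altitudes are illustrative choices.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def worst_case_eclipse_fraction(altitude_km: float) -> float:
    """Fraction of a circular orbit spent in Earth's shadow.

    Assumes the Sun lies in the orbital plane (worst case) and treats
    the shadow as a cylinder with Earth's radius.
    """
    ratio = EARTH_RADIUS_KM / (EARTH_RADIUS_KM + altitude_km)
    # The shadowed arc spans 2 * asin(ratio) out of 2 * pi radians.
    return math.asin(ratio) / math.pi


for altitude in (400.0, 550.0, 1000.0):  # illustrative LEO altitudes
    frac = worst_case_eclipse_fraction(altitude)
    print(f"{altitude:6.0f} km: up to {frac:.0%} of each orbit in shadow")
```

Under these assumptions a generic LEO satellite can spend more than a third of each orbit in darkness, which is why orbit selection matters for any design that relies on uninterrupted solar power.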

Instead of transmitting energy down to Earth — the original vision — Aetherflux is flipping the model:

Put the compute next to the power.

From Space Solar Power to Orbital Data Centers

Aetherflux did not start as a compute company.

Its original mission was space-based solar power (SBSP) — harvesting solar energy in orbit and beaming it to Earth via lasers.

That idea has existed since 1968.

It has never worked economically.

The reason is structural:

- transmission losses

- massive ground infrastructure (rectennas for microwave beams, photovoltaic receivers for lasers)

- extreme launch costs

So Aetherflux pivoted.

Not away from energy — but toward using energy in orbit instead of transporting it.

The result is what the company internally frames as:

“Galactic Brain” — a constellation of orbital AI data centers

This is the real product.

How the System Works

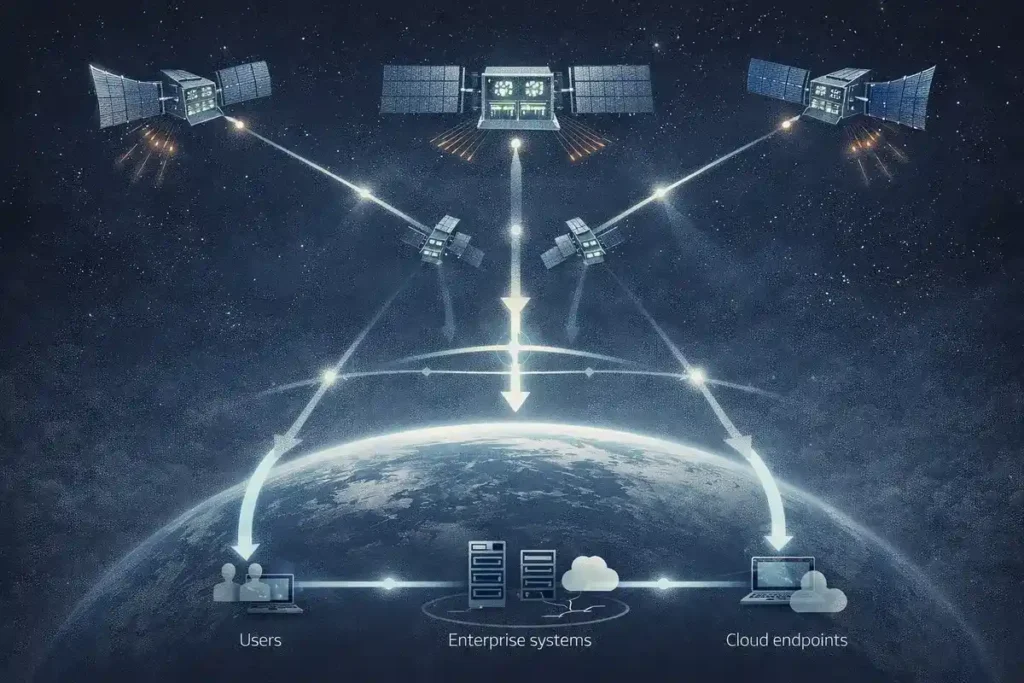

Aetherflux’s architecture is built around large, interconnected orbital compute nodes rather than thousands of small satellites.

Each satellite includes:

- solar arrays (~1,000 sq ft)

- radiator panels (~500 sq ft)

- multiple GPUs (clustered per node)

- laser communication systems

A single Falcon 9 launch could deploy ~30 satellites, forming a tightly coupled compute layer in orbit.
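For a sense of scale, the quoted panel areas can be turned into a back-of-envelope power budget. This is a sketch under stated assumptions rather than Aetherflux's own figures: the 30% cell efficiency, the 300 K radiator temperature, the 0.9 emissivity, and two-sided radiators are all illustrative guesses, and sunlight or Earth infrared absorbed by the radiators is ignored.

```python
SQFT_TO_M2 = 0.092903              # square feet to square metres
SOLAR_CONSTANT_W_M2 = 1361.0       # sunlight intensity above the atmosphere
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def array_power_kw(area_sqft: float, cell_efficiency: float) -> float:
    """Electrical output of a sun-facing solar array."""
    area_m2 = area_sqft * SQFT_TO_M2
    return area_m2 * SOLAR_CONSTANT_W_M2 * cell_efficiency / 1000.0


def radiator_capacity_kw(area_sqft: float, temp_k: float,
                         emissivity: float, sides: int = 2) -> float:
    """Heat a flat radiator can reject to deep space (Stefan-Boltzmann law)."""
    area_m2 = area_sqft * SQFT_TO_M2
    flux_w_m2 = emissivity * STEFAN_BOLTZMANN * temp_k ** 4
    return sides * area_m2 * flux_w_m2 / 1000.0


# Areas come from the article; every other input is an assumption.
power = array_power_kw(1000.0, cell_efficiency=0.30)
heat = radiator_capacity_kw(500.0, temp_k=300.0, emissivity=0.9)
satellites_per_launch = 30

print(f"Array output per satellite:    {power:.1f} kW")
print(f"Radiator capacity per sat:     {heat:.1f} kW")
print(f"Array output per launch batch: {power * satellites_per_launch / 1000:.2f} MW")
```

With these inputs both figures land in the high tens of kilowatts per satellite, so the 2:1 ratio of array area to radiator area is at least plausible: nearly every watt the array generates ends up as heat the radiators must shed.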

Key design philosophy:

- fewer satellites

- higher compute density per node

- tighter interconnection

This contrasts directly with the Starlink-style distributed systems that some competitors favor.

The Competitive Battlefield: Orbital Compute Is Becoming a Category

Aetherflux is not alone. A new category is forming rapidly.

Starcloud (Direct Startup Rival)

- Already launched a GPU-enabled satellite (Nov 2025)

- Ran AI models in orbit

- Targeting hyperscale clusters by 2027

- Strategy: many small satellites (Starlink-like)

Google (Project Suncatcher)

- TPU-powered orbital compute

- First satellites expected 2027

- Focus: internal AI workloads (Gemini)

SpaceX

- Integrating compute into Starlink V3

- Potential massive distributed AI layer

- Advantage: lowest launch cost globally

Blue Origin

- Quietly developing orbital compute infrastructure

- Long-term gigawatt-scale ambition

Axiom Space

- ISS-based data center modules

- Hybrid model (station-hosted compute)

Aetherflux’s Positioning

Aetherflux differentiates itself through:

- hybrid DNA (power + compute)

- laser transmission expertise

- clustered compute architecture

- faster deployment cycles (satellite refresh model)

Most importantly:

It is one of the few pure-play bets on orbital AI infrastructure, a layer emerging alongside broader fragmentation in global AI infrastructure control as outlined in The Global AI Map Is Fragmenting — Who Controls the Next Layer of Intelligence.

Why Investors Are Betting Now

The timing is not accidental.

Three macro forces are converging:

1. AI Power Demand Explosion

AI compute demand is outpacing grid expansion globally.

2. Data Center Bottlenecks

Land, permits, and power availability are slowing deployment.

3. Launch Cost Decline

SpaceX has reduced launch costs ~20× over two decades.

This creates a narrow but critical window where:

Orbital compute becomes plausible — but not yet proven.

The Economics Problem: Launch Costs Still Break the Model

Despite the vision, the economics are brutal.

Current Costs (2026):

- Falcon 9 list price: ~$2,500–3,600/kg

- SpaceX’s estimated internal cost: ~$600–1,000/kg

Required for viability:

- ~$200/kg (or lower)

This gap is existential.

Example:

- Launching 1,000 kg costs ~$2.5M at the low end of Falcon 9 pricing

- Real systems require hundreds of tons

- Hardware lifespan: ~5 years

This creates a cycle of:

launch → degrade → replace → repeat

Which inflates total cost of ownership dramatically.
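The replacement cycle can be made concrete with simple arithmetic. The sketch below uses the per-kilogram prices and the ~5-year lifespan quoted above; the 300-tonne fleet mass is a hypothetical stand-in for "hundreds of tons", and hardware, operations, and financing costs are left out entirely.

```python
def launch_cost_usd(mass_kg: float, price_per_kg: float) -> float:
    """One-time cost of lifting a given mass to orbit."""
    return mass_kg * price_per_kg


def annualized_launch_cost_usd(mass_kg: float, price_per_kg: float,
                               lifespan_years: float) -> float:
    """Launch cost spread over hardware life, assuming the whole fleet
    is relaunched each time it wears out."""
    return launch_cost_usd(mass_kg, price_per_kg) / lifespan_years


FLEET_MASS_KG = 300_000   # hypothetical fleet size, not a company figure
LIFESPAN_YEARS = 5        # hardware lifespan quoted in the article

scenarios = [
    ("Falcon 9, low end", 2_500),
    ("Falcon 9, high end", 3_600),
    ("viability target", 200),
]

for label, price in scenarios:
    total = launch_cost_usd(FLEET_MASS_KG, price)
    yearly = annualized_launch_cost_usd(FLEET_MASS_KG, price, LIFESPAN_YEARS)
    print(f"{label:20s} ${total / 1e6:8,.0f}M up front, ${yearly / 1e6:6,.0f}M per year")
```

At the low-end Falcon 9 price, the hypothetical fleet costs $750M to launch and an implied $150M per year just to keep replacing it; at the $200/kg target the same fleet costs $60M to launch and $12M per year.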

Why Starship Changes Everything

The entire sector depends on one variable:

Starship reaching $100–200/kg or lower

If that happens:

- orbital compute becomes cost-competitive

- large-scale deployment accelerates

- energy advantage dominates

If it doesn’t:

- orbital data centers remain uneconomical

- they stay confined to niche use cases

This is why the entire category is effectively:

a leveraged bet on launch economics
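One way to see why the threshold sits near $200/kg is to express launch cost as a surcharge on every kilowatt-hour a satellite delivers over its life. This is a rough sketch, not a published model: the 100 W/kg system-level specific power is a hypothetical assumption, illumination is treated as continuous, and hardware and operating costs are excluded.

```python
HOURS_PER_YEAR = 8766  # 365.25 days


def launch_cost_per_kwh(price_per_kg: float,
                        specific_power_w_per_kg: float,
                        lifespan_years: float) -> float:
    """Launch cost amortized over the energy one kilogram of satellite
    delivers during its life, assuming uninterrupted sunlight."""
    kwh_per_kg = specific_power_w_per_kg / 1000.0 * HOURS_PER_YEAR * lifespan_years
    return price_per_kg / kwh_per_kg


SPECIFIC_POWER_W_PER_KG = 100.0  # hypothetical whole-satellite figure
LIFESPAN_YEARS = 5               # hardware lifespan quoted in the article

for price in (2_500, 1_000, 200, 100):
    cost = launch_cost_per_kwh(price, SPECIFIC_POWER_W_PER_KG, LIFESPAN_YEARS)
    print(f"${price:>5,}/kg  ->  ${cost:.3f} of launch cost per kWh delivered")
```

Under these assumptions the launch surcharge is tens of cents per kWh at current Falcon 9 prices and only a few cents at $100–200/kg. Readers can compare those figures with whatever terrestrial electricity price they consider relevant; a lower specific power or a shorter lifespan pushes every number up proportionally.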

The Real Advantage: Energy, Not Compute

The breakthrough is not GPUs in space.

It is energy.

On Earth:

- power is scarce

- cooling is expensive

- infrastructure is slow

In orbit:

- energy is abundant

- cooling is passive

- infrastructure scales with launches

This flips the cost structure over time.

From:

compute constrained by power

To:

power enabling unlimited compute, reinforcing the broader shift toward infrastructure-first AI systems described in The AI Infrastructure Split — Who Controls the Next Layer of AI.

The Constraint Layer

Even beyond launch costs, multiple risks remain:

- radiation degrading hardware

- latency for Earth-based workloads

- orbital debris and regulation

- data sovereignty in space

- capital intensity at scale

Most critically:

the system must outperform terrestrial data centers on cost

Not just match them.

Why This Matters Now

The AI industry is approaching a hard limit.

Not in intelligence.

In infrastructure.

The next phase of AI will not be defined by better models.

It will be defined by:

- where compute happens

- how it is powered

- who controls it

Aetherflux is entering that layer — aligning with the broader transition toward compute-layer dominance seen across emerging infrastructure players like Neysa’s Compute Backbone for AI Infrastructure.

The Structural Shift: From Data Centers to Compute Environments

The transition underway is deeper than cloud vs on-premise.

It is:

- Earth → Orbit

- Grid → Solar

- Cooling systems → Vacuum physics

This is not evolution.

It is relocation.

What Aetherflux Is Actually Building

Aetherflux is not just building satellites.

It is attempting to build:

an orbital compute layer for artificial intelligence

Where:

- energy is native

- compute is colocated with power

- infrastructure is decoupled from Earth

This is a new layer in the AI stack.

Editorial Close

The idea of space-based power has existed for over 50 years.

It has never worked.

Not because it was wrong.

Because it was early.

What has changed is not the idea.

It is the context:

- AI demand

- cheaper launches

- compute intensity

- energy scarcity

Aetherflux is not trying to solve space power.

It is using space to solve AI.

And if it works, the question will no longer be:

Where do we build data centers?

It will be:

Why are they still on Earth?

Research Context

Based on TechCrunch, WSJ reporting, SBSP historical research, launch cost models, and comparative analysis of orbital compute startups including Starcloud, Google, and SpaceX.

Editorial Note

This article reflects independent analysis of publicly available information and structural shifts in AI infrastructure and space-based compute systems.