Inside the divergence between compute builders and orchestration platforms

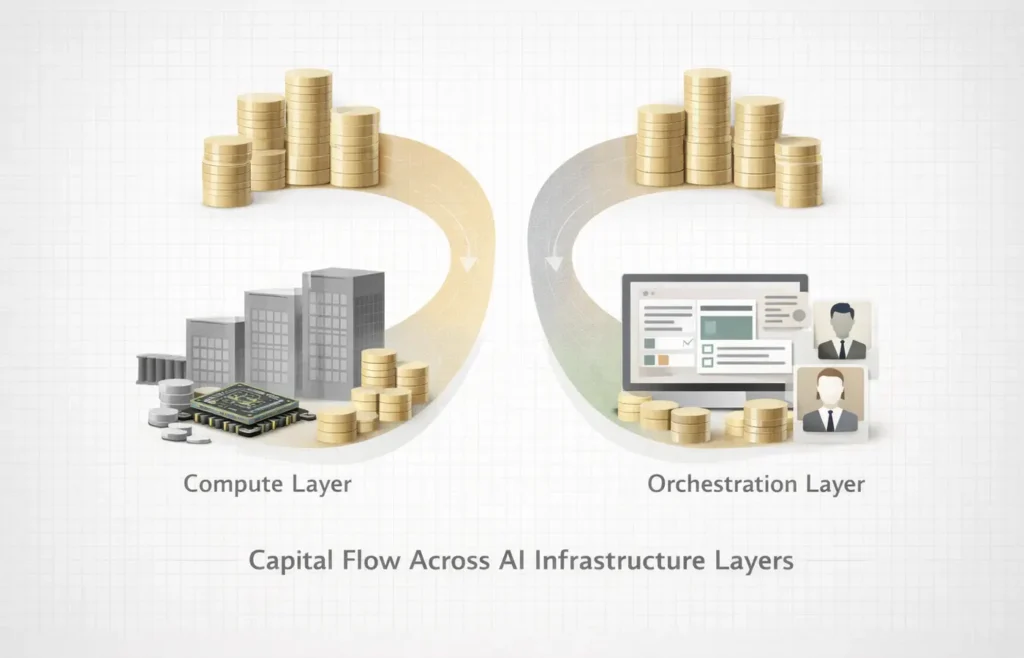

Over the past twelve months, more than $40B has flowed into AI infrastructure across model companies, data centers, orchestration platforms, and agent tooling. That capital is not expanding one category. It is separating the stack into layers with different constraints, timelines, and leverage points.

AI infrastructure is increasingly organizing around two economic systems: capability production and capability coordination. Frontier model companies secure compute supply, energy, and financing scale, while orchestration platforms embed intelligence inside enterprise workflows where reliability, governance, and switching costs compound over time.

Both layers are infrastructure. They follow different physics. This divergence emerges from constraint asymmetry: compute-layer progress is limited by physical inputs such as energy, chips, and financing cycles, while orchestration-layer progress is constrained primarily by enterprise adoption speed and integration complexity. When constraints differ, capital allocation diverges, producing layered markets rather than unified ones.

This shift accelerated in recent quarters as infrastructure funding cycles, enterprise deployments, and agent adoption timelines began converging for the first time rather than unfolding sequentially.

Two Layers, Different Physics

The first layer centers on capability production. Frontier model developers, chip suppliers, and hyperscale data center operators operate inside an environment where progress is constrained by energy availability, GPU supply, and financing scale.

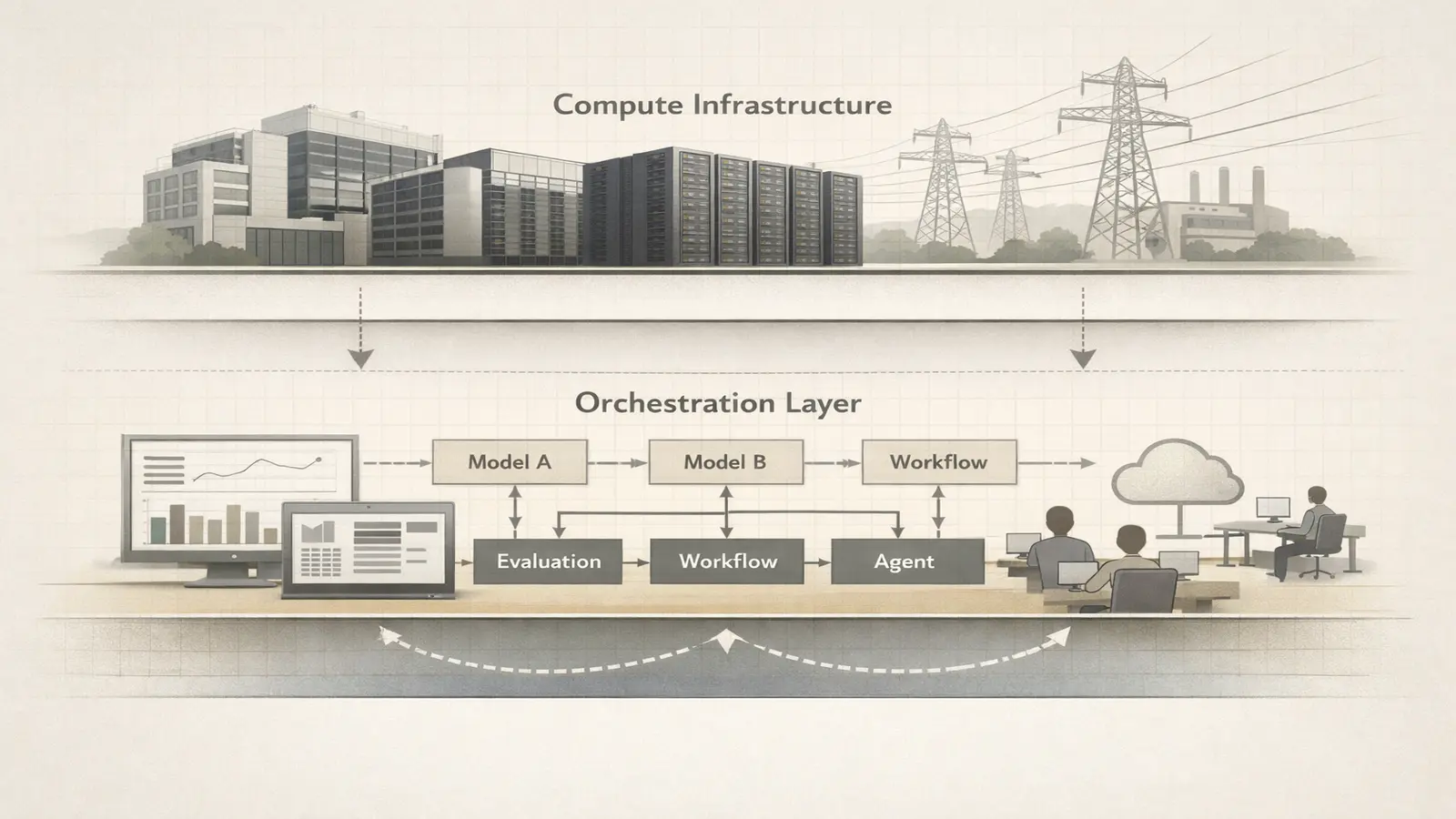

The second layer sits closer to enterprise deployment. Routing platforms, evaluation tooling, vertical agents, and workflow infrastructure coordinate how intelligence moves through organizations rather than how it is produced.

Both layers are necessary. Their economics are incompatible.

Compute-layer companies routinely operate with multi-billion-dollar capital requirements and 5–10 year infrastructure horizons, while orchestration companies often reach enterprise deployment with teams under 200 employees and funding below $200M. The divergence is structural rather than cyclical.

Where Startups Are Positioning

Recent signals reinforce the split.

Model-adjacent companies such as Anthropic, Mistral AI, and emerging physical AI builders continue raising capital tied to compute access and long-term infrastructure commitments. Meanwhile, orchestration-focused platforms including Portkey, Harvey, and enterprise agent frameworks scale through enterprise embedment rather than capacity ownership.

Anthropic’s hyperscaler partnerships illustrate compute-layer economics, where supply certainty defines competitive positioning, while platforms like Harvey embed directly into workflows where switching costs compound faster than model capability improves.

Model-layer companies now train systems costing hundreds of millions of dollars per training run, while orchestration platforms process billions of requests without owning the underlying compute. That difference defines how value accumulates across the stack.

Competition is moving from category to architecture. This mirrors how enterprise AI infrastructure competition is shifting from model capability toward deployment control.

Capital Is Reinforcing the Separation

Venture allocation patterns increasingly mirror this structural split.

Infrastructure investors, including Lightspeed, Andreessen Horowitz, Thrive Capital, and General Catalyst, are deploying capital into both layers but underwriting different outcomes. Compute-layer investments resemble industrial financing focused on duration and supply certainty. Orchestration investments resemble platform expansion driven by distribution and switching costs.

Late-stage infrastructure rounds increasingly exceed $500M, while the median vertical AI round remains closer to $20–50M, reinforcing the bifurcation between capacity financing and workflow adoption.

Capital is no longer flowing into AI broadly. It is flowing into infrastructure roles with distinct time horizons, risk profiles, and exit dynamics. This separation suggests AI infrastructure is evolving into a layered ecosystem rather than a single market category.

The primary uncertainty is whether model providers will vertically integrate orchestration capabilities, compressing the independent coordination layer before it fully matures.

Recent funding signals suggest that AI capital is repricing itself, as infrastructure duration displaces application velocity.

Recent Funding Signals: Infrastructure Capital Is Targeting Both Layers

Funding activity from mid- to late February reinforced the emerging split across AI infrastructure. Several rounds highlighted how investors are allocating capital differently across compute builders, orchestration platforms, and vertical infrastructure.

Rather than a single AI funding wave, deal structure suggests parallel financing tracks tied to infrastructure duration, enterprise embedment, and supply certainty.

Representative funding patterns across AI infrastructure layers:

| Segment | Layer | Typical raise | Investors | Valuation signal | Strategic focus |
|---|---|---|---|---|---|
| Physical AI / robotics infrastructure builders | Compute-adjacent | $100M+ late-stage rounds | Lightspeed, Thrive, corporate hyperscalers | Valuations expanding on infrastructure multiples | Hardware + model integration |
| Enterprise orchestration platforms (legal AI, routing, evaluation) | Coordination | $20–80M growth rounds | General Catalyst, a16z, Sequoia scouts | Faster step-ups driven by workflow embedment | Agent infrastructure + governance |
| AI control plane startups | Runtime | $10–30M | Infrastructure-focused funds | Early but strategic pricing | Routing, evaluation, cost control |

Named deal signals during the period reinforced the layered thesis. Harvey, an orchestration-focused legal AI platform, continued to attract growth-stage investor attention following its General Catalyst-led backing, while infrastructure orchestration startups such as Portkey signaled early capital formation around routing and control layers. In parallel, physical AI and robotics infrastructure builders secured larger late-stage commitments tied to compute access and hardware integration, highlighting the capital duration gap between the capability production and coordination layers.

Across these deals, investors appear less focused on category leadership and more focused on stack positioning. Compute-layer funding emphasizes duration and supply access, while orchestration funding emphasizes switching costs and enterprise dependency.

This pattern suggests infrastructure valuation frameworks are beginning to diverge alongside technical architecture.

Early evidence suggests infrastructure capital is beginning to price coordination leverage separately from capability scale.

Enterprise Adoption Is Accelerating the Orchestration Layer

Enterprise behavior explains why the second layer is expanding quickly.

Organizations rarely run a single model provider. Instead, they operate heterogeneous environments shaped by cost, latency, compliance, and task specialization. That complexity creates demand for routing, governance, and evaluation layers that sit between models and applications.

Many enterprise environments now operate 5–15 model providers simultaneously, accelerating demand for orchestration infrastructure that reduces vendor dependency while improving reliability.

In practice, enterprise AI teams increasingly spend more time managing routing policies, evaluation pipelines, and cost controls than selecting a single model provider, shifting operational complexity from model choice toward infrastructure coordination.
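To make "routing policy" concrete, here is a minimal sketch of the kind of rule an orchestration layer might apply across several providers. It assumes a hypothetical `Provider` record and a hypothetical `route` function; the provider names, prices, latencies, and regions are illustrative placeholders, not data about any real vendor. The policy filters on task approval, data-residency region, and a latency ceiling, then orders the survivors cheapest-first so the first entry is the primary and the rest serve as fallbacks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    name: str                   # hypothetical provider label
    cost_per_1k_tokens: float   # illustrative USD price, not real pricing
    p95_latency_ms: int         # illustrative latency figure
    regions: frozenset[str]     # regions where data may be processed (compliance)
    tasks: frozenset[str]       # task types this provider is approved for


def route(providers, task, region, max_latency_ms):
    """Return eligible providers, cheapest first (primary, then fallbacks)."""
    eligible = [
        p for p in providers
        if task in p.tasks                       # task specialization
        and region in p.regions                  # compliance / data residency
        and p.p95_latency_ms <= max_latency_ms   # latency budget
    ]
    # Cost control: prefer the cheapest eligible provider, break ties on latency.
    return sorted(eligible, key=lambda p: (p.cost_per_1k_tokens, p.p95_latency_ms))


# Usage with made-up providers.
providers = [
    Provider("provider-a", 0.50, 900, frozenset({"eu", "us"}), frozenset({"summarize", "draft"})),
    Provider("provider-b", 0.15, 400, frozenset({"us"}), frozenset({"summarize"})),
    Provider("provider-c", 0.30, 600, frozenset({"eu"}), frozenset({"summarize", "classify"})),
]
order = route(providers, task="summarize", region="eu", max_latency_ms=1000)
print([p.name for p in order])  # ['provider-c', 'provider-a']
```

The point of the sketch is where the decision lives: model choice becomes an output of a policy the enterprise controls, which is why the orchestration layer, rather than any single provider, sits in the path of usage.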

Infrastructure is shifting from ownership to coordination. This coordination layer is increasingly being formalized as AI control planes, which are emerging as governance infrastructure.

The Emerging Stack Architecture

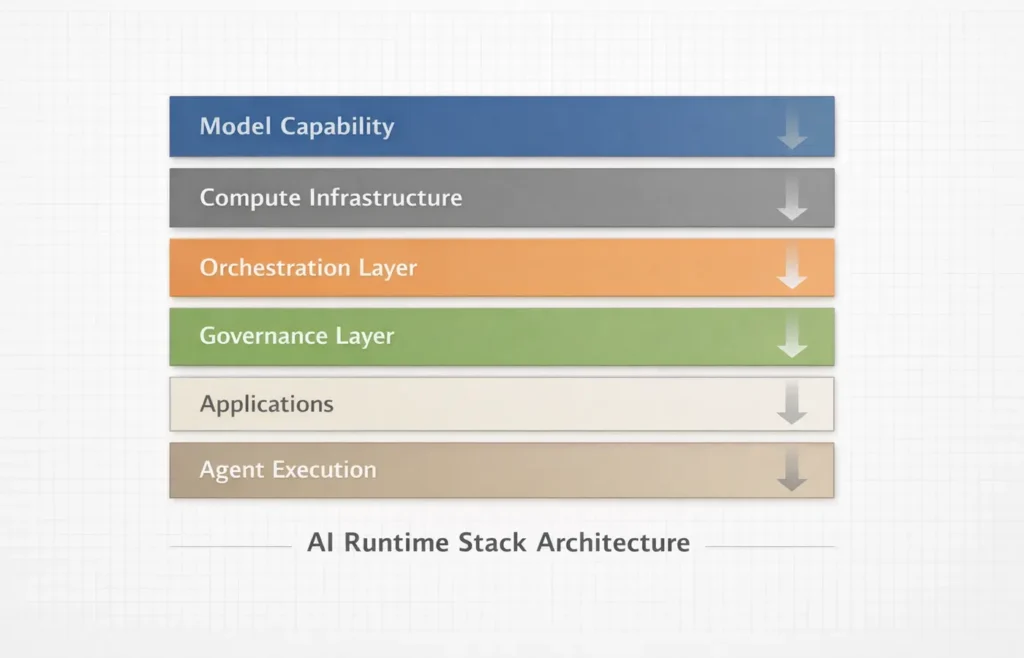

The practical AI stack is thickening:

- Model capability

- Compute infrastructure

- Orchestration layer

- Governance layer

- Application layer

- Agent execution

Control points increasingly sit in the middle rather than at the top.

This architecture is already visible across enterprise deployments, where orchestration platforms determine which models are used, how costs are managed, and how agents operate under policy constraints. The center of gravity is moving toward runtime infrastructure, where routing, evaluation, and policy shape real usage more than raw model capability.
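The phrase "agents operate under policy constraints" can be illustrated with a small governance gate that checks each agent action before it runs. This is a sketch under stated assumptions: the `Policy` class, its tool allow-list, and its monthly budget are hypothetical constructs for illustration, not the API of any control-plane product.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    allowed_tools: set[str]      # hypothetical allow-list of agent tools
    monthly_budget_usd: float    # hypothetical spend ceiling
    spent_usd: float = 0.0       # running total of approved spend

    def authorize(self, tool: str, est_cost_usd: float) -> tuple[bool, str]:
        """Check one agent action against the allow-list and remaining budget."""
        if tool not in self.allowed_tools:
            return False, f"tool '{tool}' not permitted"
        if self.spent_usd + est_cost_usd > self.monthly_budget_usd:
            return False, "budget exceeded"
        self.spent_usd += est_cost_usd  # record spend only for approved actions
        return True, "ok"


# Usage with illustrative numbers.
policy = Policy(allowed_tools={"search", "summarize"}, monthly_budget_usd=10.0)
print(policy.authorize("search", 4.0))       # (True, 'ok')
print(policy.authorize("send_email", 1.0))   # (False, "tool 'send_email' not permitted")
print(policy.authorize("summarize", 7.0))    # (False, 'budget exceeded')
print(policy.authorize("summarize", 6.0))    # (True, 'ok')
```

Because every action passes through a check like this, whoever operates the gate shapes real usage regardless of which model sits behind it.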

This layered architecture is also visible in the fragmenting global AI infrastructure landscape shaped by sovereign compute strategies.

Why Venture Capital Is Watching This Closely

For investors, the split introduces a new portfolio logic.

Compute-layer winners may capture outsized value but require unprecedented capital and longer timelines. Orchestration winners may scale faster, embed deeply, and generate earlier recurring revenue through workflow dependency.

The risk profiles differ. The strategic importance does not.

Infrastructure investors increasingly hold positions across both layers, treating them as interdependent rather than competing categories. The AI stack is becoming a coordinated system rather than a linear market, where value accrues at multiple control points simultaneously.

The Contrarian Insight: Coordination May Outscale Capability

Public narratives still frame AI competition around models. Deployment realities suggest coordination layers could accumulate leverage more predictably.

Capability spreads horizontally as models improve across providers. Embedment compounds vertically as orchestration layers sit in the path of enterprise usage.

In that environment, control over traffic may matter more than ownership of intelligence.

The companies shaping how intelligence is routed, evaluated, and governed influence demand without controlling supply. Historically, infrastructure layers positioned between production and consumption accumulate the most durable leverage.

The Forward Signal

Over the next 24–36 months, enterprise AI traffic is likely to concentrate around orchestration layers that sit between multiple model providers and production workflows, shifting leverage from model ownership toward deployment control.

Compute remains the constraint. Coordination becomes the multiplier. Founder strategy is beginning to mirror this shift, as seen in analyses of AI capital stack design shaping infrastructure competition.

AI infrastructure is evolving from a race to build intelligence into a competition to structure its distribution.

The infrastructure narrative is no longer singular. It is layered.

The week did not produce a breakthrough model announcement. It clarified where the next competitive boundaries are forming.

Editorial Takeaway

AI infrastructure is entering a phase where production and deployment evolve on different clocks. Capital, enterprise adoption, and startup positioning are reinforcing that divergence rather than smoothing it.

The most important companies of this cycle may not be those that build intelligence fastest, but those that determine how intelligence moves.

Infrastructure layers that coordinate usage tend to persist longer than the capabilities they orchestrate, suggesting AI’s next leaders will be defined less by intelligence creation and more by control over intelligence flow.

Research Context: Synthesizes funding signals, enterprise deployment patterns, venture allocation trends, and positioning across frontier AI startups and infrastructure platforms.

Editorial Note: This article reflects independent analysis of structural shifts shaping the global AI infrastructure landscape.