Conntour isn’t building surveillance software. It is redefining how machines interpret the physical world.

Conntour, a vision-language AI startup operating at the intersection of computer vision, natural language understanding, and real-time systems, has raised $7 million from General Catalyst, Y Combinator, SV Angel, and Liquid 2 Ventures. The round positions the company within a critical and underbuilt layer of the AI stack: transforming continuous video streams into structured, queryable intelligence. While much of the current AI cycle remains concentrated on text, code, and copilots, Conntour reflects a deeper structural transition toward systems capable of interpreting and operationalizing the physical world in real time.

This is not an incremental upgrade to surveillance systems. It is a shift from observation to interpretation.

The Real Problem: Video Exists — But It Is Not Usable

Modern enterprises operate within environments saturated with cameras, generating vast and continuous streams of visual data across warehouses, airports, retail stores, logistics networks, and urban infrastructure, all of which should theoretically provide comprehensive visibility into real-world operations.

In practice, that visibility does not translate into intelligence.

Video, in its current form, is not an active system of understanding. It is a passive archive:

- stored, not interpreted

- recorded, not queried

- accessible, but operationally unusable

Security and operations teams remain dependent on manual review workflows, predefined detection rules, and reactive processes that begin only after an event has occurred, which fundamentally limits the ability to extract timely and actionable insight.

The bottleneck is no longer data collection.

It is data accessibility and interpretability at scale.

The Structural Shift: From Monitoring to Querying Reality

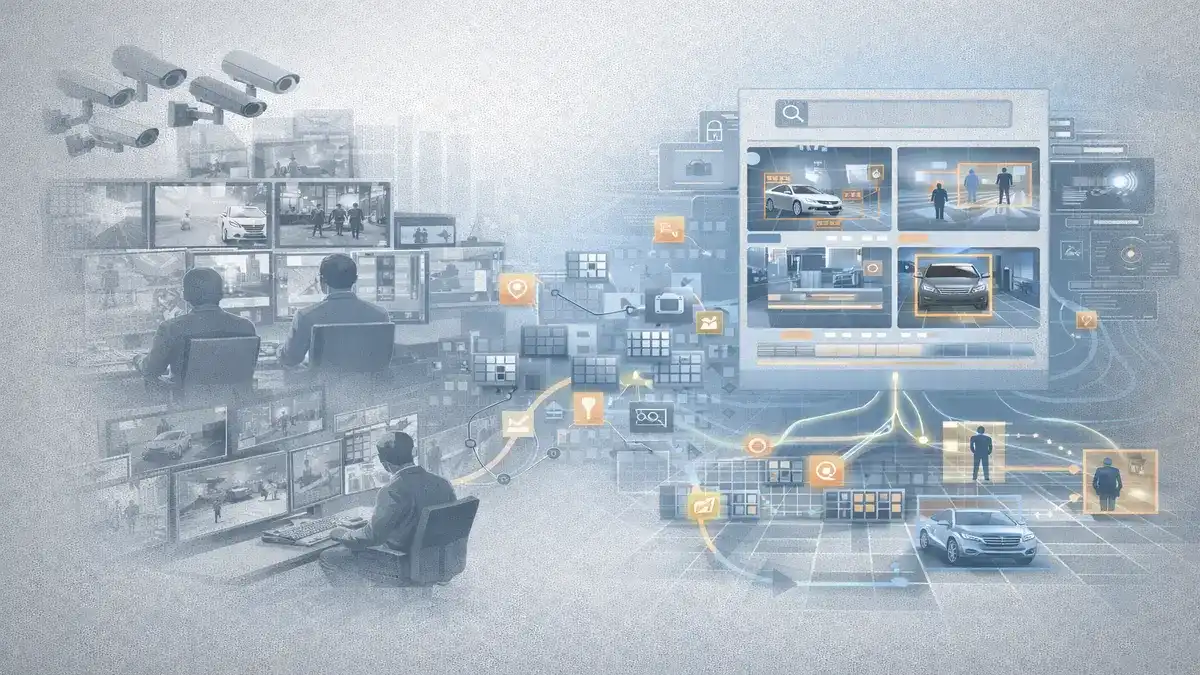

Conntour’s core insight reframes the role of video entirely by treating it not as footage to be reviewed, but as a system to be queried.

Instead of relying on rigid detection rules or manual inspection, users can interact with video using natural language queries that correspond directly to real-world behaviors and scenarios.

- “Show me someone leaving a bag unattended”

- “Find all delivery trucks that stopped for more than 10 minutes”

- “Identify instances of unauthorized access to restricted areas”

The system translates these queries into semantic search operations across both live and historical video streams, effectively transforming passive visual data into an interactive intelligence layer.
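To make the idea of a semantic search operation concrete, here is a minimal sketch under loud assumptions. It is not Conntour's implementation. The `Clip` type, the text descriptions, and the bag-of-words `embed` function are hypothetical stand-ins. A production system would presumably compare the query against embeddings produced by a vision-language model rather than word counts.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class Clip:
    camera_id: str
    start_s: float      # clip start time in seconds
    description: str    # hypothetical stand-in for a learned visual embedding


def embed(text: str) -> Counter:
    """Toy embedding: lowercase bag-of-words counts (an assumption, not a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: str, clips: list[Clip], top_k: int = 1) -> list[Clip]:
    """Rank clips by similarity to the query and return the best top_k."""
    q = embed(query)
    ranked = sorted(clips, key=lambda c: cosine(q, embed(c.description)), reverse=True)
    return ranked[:top_k]


clips = [
    Clip("lobby-1", 120.0, "person leaving a bag unattended near bench"),
    Clip("dock-3", 300.0, "delivery truck parked at loading dock"),
    Clip("gate-2", 45.0, "person badge entry at gate"),
]
best = search("someone leaving a bag unattended", clips)[0]
print(best.camera_id, best.start_s)
```

The point of the sketch is the shape of the operation: queries and footage are mapped into the same vector space, and retrieval becomes a nearest-neighbor ranking rather than a hand-written rule.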

This changes the interaction model at a fundamental level:

- watching → searching

- detecting → understanding

- reacting → querying

Video is no longer something that is observed.

It becomes something that can be interrogated as a system of record, a shift aligned with the broader execution-layer transformations described in “AI Is Replacing Dental Front Desks — Inside Patientdesk’s Execution-Layer Bet.”

Why This Is Hard: Vision, Language, and Time as a Unified System

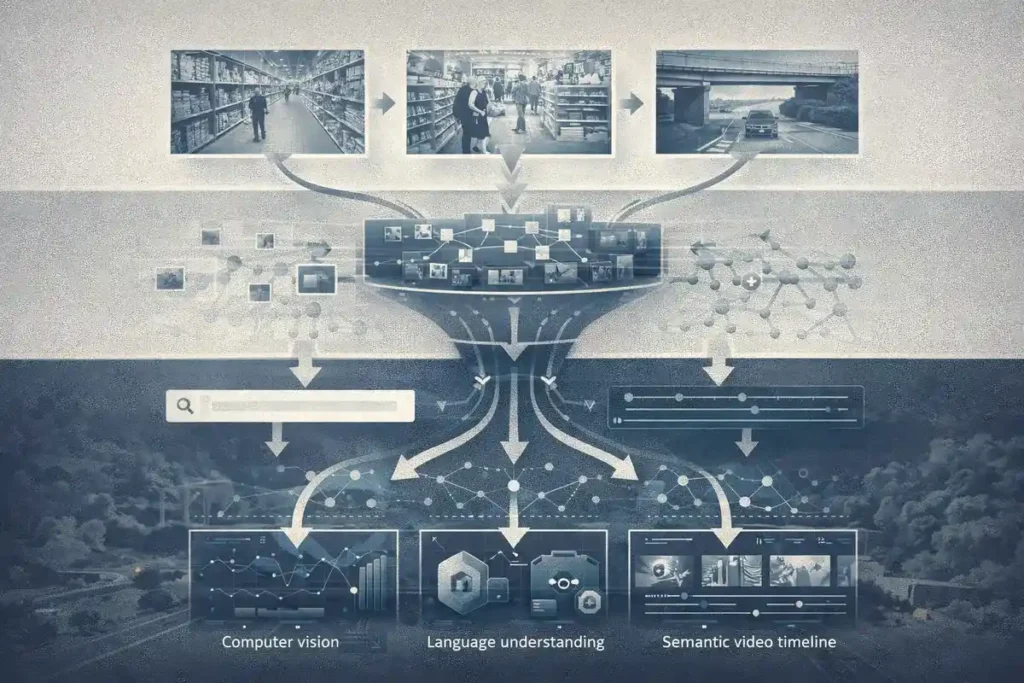

Unlike text-based AI systems, video intelligence requires the simultaneous integration of multiple complex domains, each of which introduces its own set of technical and computational challenges.

1. Visual Understanding

Accurately identifying objects, people, and environments across noisy, low-resolution, and dynamically changing footage.

2. Language Mapping

Translating natural language queries into structured representations that can be executed across diverse visual contexts.

3. Temporal Reasoning

Understanding sequences of events over time rather than isolated frames, which is essential for interpreting behavior and intent.
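As a rough illustration of why temporal reasoning differs from per-frame detection, consider a query such as finding trucks that stopped for more than 10 minutes. The sketch below assumes an upstream tracker already emits per-object observations. The `Observation` type, the ground-plane coordinates, and the 0.5 m movement threshold are all hypothetical choices made for illustration, not details from the company.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    track_id: str
    t: float   # timestamp in seconds
    x: float   # position in metres (hypothetical ground-plane coordinates)
    y: float


def longest_stop(obs: list[Observation], max_move: float = 0.5) -> float:
    """Longest span in seconds where each consecutive step moved less than max_move.

    Note: this compares consecutive observations only, so very slow drift
    would still count as stopped. A real system would need a sturdier test.
    """
    obs = sorted(obs, key=lambda o: o.t)
    best = 0.0
    start = None  # timestamp at which the current stationary run began
    for prev, cur in zip(obs, obs[1:]):
        moved = ((cur.x - prev.x) ** 2 + (cur.y - prev.y) ** 2) ** 0.5
        if moved < max_move:
            if start is None:
                start = prev.t
            best = max(best, cur.t - start)
        else:
            start = None
    return best


def stopped_longer_than(tracks: dict[str, list[Observation]], min_seconds: float) -> list[str]:
    """Return the track ids whose longest stop exceeds min_seconds."""
    return [tid for tid, obs in tracks.items() if longest_stop(obs) > min_seconds]


tracks = {
    "truck-A": [
        Observation("truck-A", 0, 0.0, 0.0),
        Observation("truck-A", 60, 10.0, 0.0),
        Observation("truck-A", 120, 10.0, 0.0),
        Observation("truck-A", 780, 10.1, 0.0),
        Observation("truck-A", 840, 30.0, 0.0),
    ],
    "truck-B": [
        Observation("truck-B", 0, 0.0, 0.0),
        Observation("truck-B", 60, 20.0, 0.0),
        Observation("truck-B", 120, 40.0, 0.0),
    ],
}
result = stopped_longer_than(tracks, min_seconds=600)
print(result)
```

No single frame contains the answer here. The event only exists as a relationship between observations over time, which is why frame-level detectors alone cannot serve this kind of query.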

Most legacy systems fail because they rely on predefined rules, such as motion detection or fixed object recognition, that assume static conditions and do not generalize to real-world variability.

Reality is not rule-based.

It is contextual, dynamic, and continuously evolving.

Conntour’s approach reflects a shift toward semantic video understanding, where systems interpret intent rather than simply detect patterns. This mirrors the broader transition toward expertise-driven AI systems described in “The Next AI Breakthrough Is Expertise, Not Just Models.”

The Architecture Insight: Efficiency Becomes the Product

The primary constraint in this category is not accuracy alone, but the ability to deliver intelligence efficiently across large-scale deployments where thousands of video streams must be processed in real time.

This is where Conntour’s architecture becomes a defining differentiator.

Rather than applying uniform computation across all inputs, the system dynamically allocates compute resources based on the complexity and requirements of each query, optimizing both performance and cost.

- dynamic model selection per query

- adaptive compute allocation

- ability to process approximately 50 camera feeds on a single consumer GPU

This introduces a critical principle for next-generation AI systems:

The system must decide not only what to compute, but how much computation is necessary.
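One way to picture per-query compute allocation is a small router that picks a model tier from the apparent complexity of the query. The sketch below is a guess at the general pattern, not a description of Conntour's architecture. The tier names, the per-frame costs, the keyword heuristics, and the 75% utilisation budget are all invented for illustration and are not measured figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_ms_per_frame: float  # hypothetical GPU time per processed frame


# Ordered from cheapest to most expensive (all values are illustrative).
TIERS = [
    ModelTier("light-detector", 2.0),
    ModelTier("mid-vlm", 8.0),
    ModelTier("heavy-vlm", 40.0),
]

# Crude keyword signals standing in for a real query analyser.
TEMPORAL_HINTS = {"minutes", "seconds", "after", "before", "longer", "stopped"}
RELATIONAL_HINTS = {"unattended", "unauthorized", "following", "without"}


def complexity(query: str) -> int:
    """Score 0 to 2: one point each for temporal and relational cues."""
    words = set(query.lower().split())
    return int(bool(words & TEMPORAL_HINTS)) + int(bool(words & RELATIONAL_HINTS))


def select_tier(query: str) -> ModelTier:
    """Pick the cheapest tier assumed sufficient for the query's complexity."""
    return TIERS[complexity(query)]


def feeds_per_gpu(tier: ModelTier, fps_sampled: float, utilisation: float = 0.75) -> int:
    """How many feeds fit in one second of GPU time at the given sampling rate."""
    budget_ms = 1000.0 * utilisation
    return int(budget_ms // (tier.cost_ms_per_frame * fps_sampled))


for q in (
    "show every red truck",
    "find trucks that stopped for more than 10 minutes",
    "person leaving a bag unattended for 5 minutes",
):
    tier = select_tier(q)
    print(f"{q!r} -> {tier.name}")
```

Under these made-up costs, `feeds_per_gpu(TIERS[0], fps_sampled=5)` gives 75 feeds while `feeds_per_gpu(TIERS[2], fps_sampled=1)` gives 18. The gap shows why routing simple queries to cheap models matters more for feed density than any single model's accuracy.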

At scale, efficiency is no longer a secondary optimization.

It becomes the product itself.

The Market Layer: A $50B System Without Intelligence

The global video surveillance market exceeds $50 billion, yet the underlying interaction paradigm has remained largely unchanged, continuing to rely on storage, playback, and rule-based alert systems that resemble legacy architectures rather than modern intelligence platforms.

Most existing systems function as:

- digital recording systems

- static monitoring dashboards

- fragmented alert mechanisms

This creates a structural imbalance.

Video exists at scale.

Intelligence does not.

While companies such as Verkada and Rhombus have advanced hardware and cloud integration, the query layer — the ability to interact with video as structured data — remains underdeveloped.

Conntour is not competing on hardware.

It is competing on how video is understood and accessed, a positioning consistent with infrastructure-layer plays that sit above existing systems, similar to the one described in “Portkey Is Building the Control Plane for AI Systems.”

The Strategic Position: Software Layer Above Infrastructure

One of Conntour’s most important strategic decisions is its hardware-agnostic approach, which allows it to integrate with existing camera infrastructure rather than requiring replacement or significant capital investment.

The system:

- integrates with existing deployments

- supports on-premise, cloud, and hybrid environments

- scales without dependency on proprietary hardware

This positions Conntour as a software intelligence layer that sits above the physical infrastructure stack.

Not replacing systems.

Controlling how they are used, which reinforces the broader control-layer thesis outlined in “The AI Infrastructure Split — Who Controls the Next Layer of AI.”

The Ethics Layer: Where Capability Meets Constraint

Surveillance AI operates within one of the most sensitive and heavily scrutinized domains in technology, where capability alone is insufficient to drive adoption without corresponding trust, governance, and control mechanisms.

Conntour’s emphasis on selective deployment and customer alignment reflects a structural reality:

This category is constrained not only by technical challenges, but by ethical boundaries.

- misuse risk is significant

- regulatory pressure is increasing

- trust directly determines distribution

In this market, growth is not purely a function of capability.

It is a function of controlled deployment and institutional trust.

The Founder Signal: Speed Reflects Structural Clarity

The $7 million round closing within 72 hours signals more than investor enthusiasm; it reflects a convergence of clear narrative positioning, early product validation, and recognition that this category represents a foundational shift in how AI interacts with the physical world.

Investors such as General Catalyst and Y Combinator are not simply backing a product.

They are aligning with a broader movement toward operational AI systems embedded directly into real-world environments.

Capital is shifting accordingly.

Not toward models alone.

Toward systems that execute.

The Structural Shift: From Video Storage to Video Intelligence

Conntour represents a broader pattern in how data systems evolve, transitioning from passive collection layers to active intelligence systems capable of real-time interpretation and interaction.

Legacy model:

- collect data

- store data

- review manually

Emerging model:

- collect data

- index semantically

- query dynamically

This transformation has already occurred in adjacent domains:

- text → search engines

- data → analytics platforms

- code → copilots

Now it is occurring in video.

The Constraint Layer

The opportunity is substantial, but the constraints are deeply structural and difficult to resolve at scale.

- inconsistent and low-quality video input

- ambiguity in natural language queries

- high computational requirements

- regulatory and ethical limitations

- requirement for near-perfect reliability

Most critically:

False negatives are unacceptable.

In this domain, missing an event is not a usability issue.

It is a system failure.
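The cost of avoiding false negatives can be shown with a small worked example. Assume a detector assigns each candidate event a confidence score and flags anything at or above a threshold. The scores and labels below are invented for illustration and do not come from any real system.

```python
def recall_and_alerts(scores: list[float], labels: list[bool], threshold: float) -> tuple[float, int]:
    """Return (recall, number of alerts raised) at a given score threshold."""
    flagged = [s >= threshold for s in scores]
    true_pos = sum(f and y for f, y in zip(flagged, labels))
    false_neg = sum((not f) and y for f, y in zip(flagged, labels))
    total_real = true_pos + false_neg
    recall = true_pos / total_real if total_real else 1.0
    return recall, sum(flagged)


# Hypothetical detector scores, with True marking a real event.
scores = [0.95, 0.80, 0.55, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

strict = recall_and_alerts(scores, labels, threshold=0.5)
lenient = recall_and_alerts(scores, labels, threshold=0.35)
print(strict, lenient)
```

At the stricter threshold, one of the three real events is missed. Lowering the threshold recovers it, but raises the number of alerts a human must review. Pushing recall toward 100% therefore shifts the burden onto false positives and compute, which is why near-perfect reliability is a structural constraint rather than a tuning detail.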

What Conntour Is Actually Building

Conntour is not building a surveillance platform in the traditional sense.

It is constructing a query layer for the physical world, where visual data is transformed into structured, searchable intelligence that can be accessed in real time and used to inform operational decisions.

In this system:

- video becomes data

- data becomes searchable

- search becomes intelligence

This is not about cameras.

It is about understanding reality at scale.

Editorial Close

The first phase of AI made text searchable.

The second phase made knowledge generative.

The next phase is making the physical world queryable.

The fundamental shift is not the creation of more data, but the ability to interact with reality itself through systems that can interpret, respond, and act in real time.

Conntour is building within that layer.

Quietly.

But structurally.

Research Context

Based on TechCrunch reporting, company disclosures, investor participation, and analysis of emerging trends in vision-language models, enterprise AI infrastructure, and video intelligence systems.

Editorial Note

This analysis reflects independent interpretation of publicly available information and broader structural shifts in AI-driven operational systems.