Deccan AI isn’t building models. It is scaling the layer that makes them usable.

Deccan AI, a post-training infrastructure startup operating across data generation, evaluation, and reinforcement learning, has raised $25 million in a Series A led by A91 Partners — positioning itself inside a critical but underbuilt layer of the AI stack: the systems that refine, validate, and operationalize models after they are trained. While most attention remains concentrated on frontier model development, Deccan represents a structural shift toward the infrastructure required to make those models reliable, consistent, and usable inside production environments.

This is not a peripheral layer. It is becoming a necessary one. Because the real bottleneck in AI is no longer training — it is what happens after training ends.

The Missing Layer: AI Doesn’t Break at Training — It Breaks After

For years, the dominant narrative in AI has centered on model creation, with progress measured through larger datasets, better architectures, and increased compute. That phase is now maturing, and the constraint has clearly moved downstream into environments where models interact with real-world systems and unpredictable inputs.

Models today can generate, reason, and simulate with impressive capability, but they still fail when deployed into production contexts where precision, consistency, and reliability are non-negotiable. Hallucinations persist, outputs lack consistency, domain accuracy breaks under pressure, and real-world environments introduce edge cases that models are not inherently designed to handle.

This is where post-training begins. And where most systems collapse.

Deccan AI is built specifically for this layer — not to create intelligence, but to stabilize it.

From Data Labeling to Post-Training Infrastructure

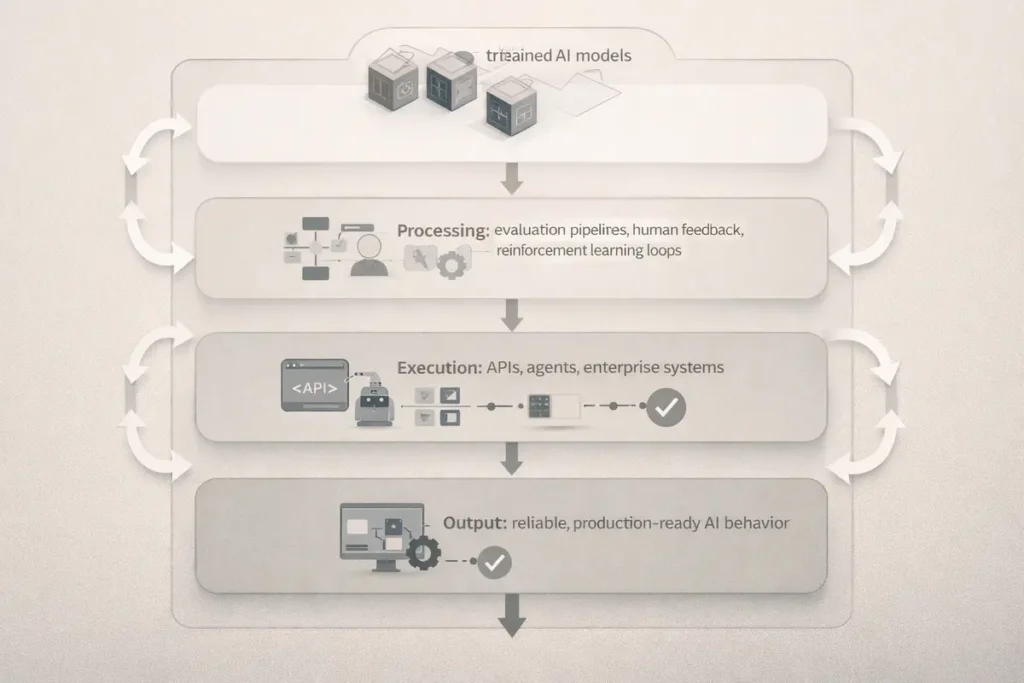

Traditional data-labeling companies operated at the input layer, focusing on preparing datasets for initial training cycles. Deccan operates at a fundamentally different level, targeting the phase where models must be refined, evaluated, and adapted to function reliably in real-world environments.

Instead of preparing data, it works across multiple post-training dimensions:

- data generation for edge-case coverage

- model evaluation under real-world constraints

- reinforcement learning with expert feedback

- tool-use training (APIs, external systems)

- agent capability refinement

This is not preprocessing. It is post-training control.

The shift appears subtle on the surface, but it is structurally significant. Once models are deployed, value no longer depends on intelligence alone; it depends on reliability under real conditions. That transition is increasingly visible across enterprise AI systems, where execution layers are replacing surface-level capability, as seen in “AI Is Replacing Dental Front Desks — Inside Patientdesk’s Execution-Layer Bet.”

The Product Layer: Turning Human Feedback Into System Behavior

At the core of Deccan’s system is a simple but powerful principle: AI improves fastest when feedback is structured, scalable, and continuous across real-world usage cycles.

The company operationalizes this through tightly integrated systems that include expert-led evaluation pipelines, reinforcement learning environments, and continuous feedback loops directly tied to production behavior. Its evaluation suite, Helix, along with its operations automation platform, allows enterprises and labs to move beyond static assessment toward dynamic system refinement.

This effectively turns human expertise into a persistent extension of training rather than a one-time annotation pass.

Not static input. Continuous shaping.

The Workforce Model: Scaling Intelligence Through Distributed Expertise

Deccan’s infrastructure is not purely technical. It is deeply socio-technical, combining system architecture with large-scale human coordination.

The company operates with approximately 125 employees while orchestrating a network of over 1 million contributors. Of these, 5,000 to 10,000 are active in a typical month, which is at most about 1% of the network, and many of them are domain experts or hold advanced degrees. This creates a hybrid model where human intelligence is systematically integrated into machine learning workflows.

Most of this workforce is concentrated in India. This is not incidental. It is strategic.

By focusing geographically, Deccan improves quality control, coordination efficiency, and domain specialization while enabling faster turnaround times under tight operational constraints. This stands in contrast to competitors that distribute work across dozens of countries, often trading off consistency for scale.

Less spread. More control.

India’s Role: From Outsourcing Hub to AI Infrastructure Layer

Deccan’s model reflects a broader structural shift in the global AI ecosystem, where India is emerging not as a primary builder of frontier models, but as a critical execution layer within the AI value chain.

This includes training data generation, evaluation pipelines, reinforcement learning workflows, and domain-specific expertise that directly influence how models perform in production environments.

In effect, India is becoming the human infrastructure behind machine intelligence.

Less visible. But deeply embedded.

The Market Shift: From Models to “Picks and Shovels”

The $25 million raise signals more than company growth. It reflects a broader capital reallocation toward infrastructure layers that enable models to function reliably at scale.

AI investment is no longer concentrated solely in foundation models, chips, and compute infrastructure. It is expanding into the layers that make those systems usable, including data infrastructure, evaluation systems, orchestration layers, and post-training services. That capital shift is in line with the trend described in “AI Funding Is Splitting Into Infrastructure and Physical Intelligence Bets.”

This is the “picks and shovels” phase of AI, where supporting infrastructure becomes as strategically important as the models themselves.

Competitive Landscape: The Post-Training Arms Race

Deccan is entering a category that is rapidly taking shape, with multiple players attacking different parts of the post-training stack.

Key competitors include Scale AI, Surge AI, Turing, and Mercor, each operating across adjacent but distinct layers of the ecosystem. The competitive landscape is increasingly fragmenting into specialized roles, including labeling platforms, talent marketplaces, evaluation systems, and agent training layers.

Deccan positions itself at the intersection of these functions.

Execution across the full lifecycle.

That positioning matters, because fragmentation creates opportunities for companies that can integrate across layers and deliver end-to-end system reliability. The pattern is consistent with the broader infrastructure consolidation trends seen in “Inside Kluisz.ai: The Startup Rebuilding Cloud Infrastructure for the AI-Native Era.”

The Constraint Layer

The opportunity is significant. The risks are equally structural.

Deccan’s model depends on high-quality execution in an environment where tolerance for error is extremely low, while also managing scalability challenges inherent to human-in-the-loop systems. Revenue concentration among a small number of large customers introduces additional exposure, and competition from vertically integrated platforms could compress margins over time.

Most critically, post-training systems are difficult to scale without degrading quality.

And in this category, quality is the product.

Why This Matters Now

This is no longer a niche layer. It is becoming foundational.

Models are already powerful enough for many use cases, but enterprises are increasingly prioritizing reliability over novelty, especially in environments where outputs directly affect operations, revenue, or decision-making. At the same time, agent-based systems require continuous refinement, further increasing the importance of post-training infrastructure.

The next phase of AI will not be defined by better models alone.

It will be defined by better systems around those models.

The Structural Shift: From Training to Lifecycle Infrastructure

AI is entering a new phase where the stack is expanding beyond model creation into full lifecycle management.

Training is reaching scale. Inference is commoditizing. Post-training is emerging as the next bottleneck.

This creates a new hierarchy:

models → systems → infrastructure

Deccan operates in the final layer, where long-term value compounds through control, reliability, and integration. This is similar to how deeper control layers are emerging across AI systems, as explored in “Dash0 Hits $1B — Why AI Observability Is Becoming a Control Layer.”

What Deccan AI Is Actually Building

Deccan is not a services company. It is an infrastructure layer that sits between intelligence and execution.

It refines model behavior, enforces quality, operationalizes feedback, and connects AI systems to real-world environments where performance matters.

In effect, it controls the transition.

From capability to usability.

Editorial Close

The AI industry spent the last decade building intelligence.

The next decade will be about making that intelligence usable, reliable, and operational at scale. That shift will not be defined by models alone, but by the systems that validate, refine, and continuously improve them.

Deccan AI is building inside that layer.

Quietly. But structurally.

Research Context

Based on company disclosures, TechCrunch reporting, funding data, and analysis of emerging AI infrastructure layers across post-training, evaluation, and reinforcement learning systems.

Editorial Note

This article reflects independent analysis of publicly available information and broader structural shifts in the AI ecosystem.