Inside the immigrant resilience, first-principles thinking, and strategic bets that transformed Nvidia from a graphics startup into the backbone of modern artificial intelligence.

Artificial intelligence may define the next era of computing, but the systems powering that revolution are not built by software alone. Beneath every large language model, robotics platform, and enterprise AI system lies an immense layer of accelerated computing infrastructure that is rapidly becoming the backbone of the global technology economy.

At the center of that infrastructure sits Nvidia — and the founder who spent more than three decades building it.

Jensen Huang, the co-founder and chief executive of Nvidia, has quietly shaped the architecture of the modern AI industry. As global demand for AI infrastructure accelerates, Nvidia’s GPUs have become the dominant processors used to train frontier models, run hyperscale data centers, and power the next generation of intelligent machines.

This shift is unfolding amid an unprecedented surge of capital into artificial intelligence. Billions of dollars are flowing into the technologies that power the compute layer of the AI stack, a trend explored in our analysis of The $189B AI Funding Surge Is Reshaping the Deep Tech Venture Map.

That position did not emerge overnight.

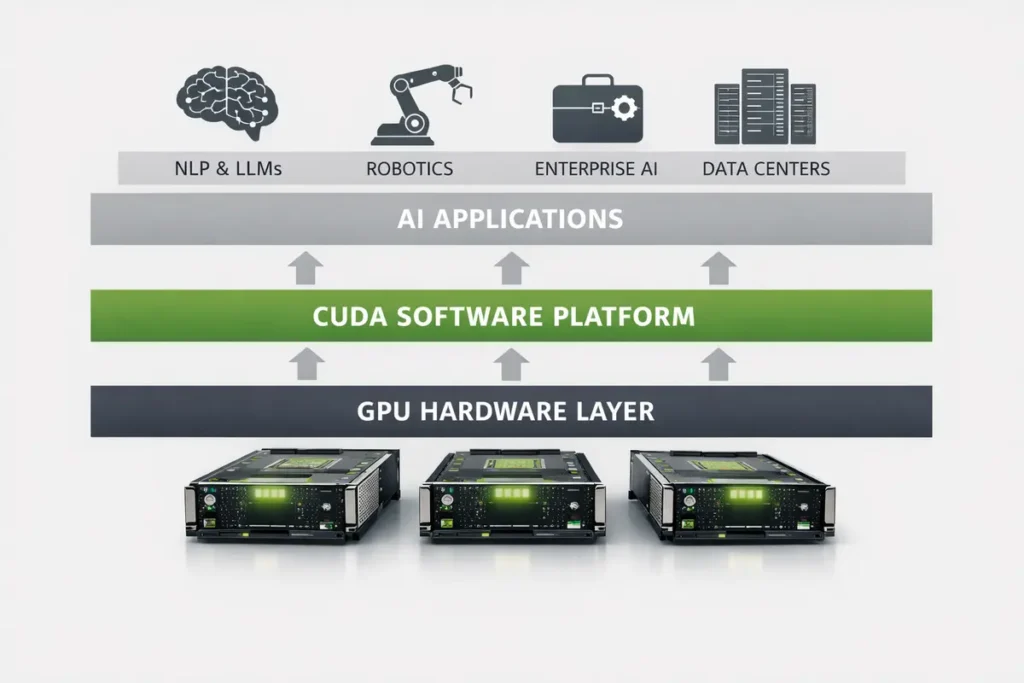

Under Huang’s leadership, Nvidia evolved from a niche graphics chip company into what many analysts now describe as the compute layer of the global AI stack. Today the company’s hardware and software platforms sit beneath nearly every major AI system being developed — from research labs building frontier models to enterprises deploying AI across their operations.

In effect, Huang did not simply build a successful semiconductor company.

He helped build the infrastructure foundation of the AI economy itself.

The Early Life That Shaped the Founder

Jensen Huang’s story begins far from Silicon Valley.

Born Jen-Hsun Huang in 1963 in Tainan, Taiwan, he spent part of his childhood in Thailand before immigrating to the United States at age nine with his older brother. The two arrived as unaccompanied minors and were mistakenly enrolled at the Oneida Baptist Institute in Kentucky, a strict reform school rather than the elite academy their family had intended.

Life there was difficult. Huang has described cleaning bathrooms, facing bullying, and adapting to an environment where resilience was essential.

Those experiences shaped a mindset that would later define his leadership.

Huang often summarizes that philosophy simply:

“People who can suffer are ultimately the ones that are the most successful.”

After reuniting with his family in Oregon, Huang graduated from Aloha High School at sixteen, later earning a B.S. in electrical engineering from Oregon State University and a master’s degree in electrical engineering from Stanford University.

Before starting Nvidia, he worked as a microprocessor designer at Advanced Micro Devices (AMD) and later at LSI Logic, gaining early experience in semiconductor design.

Those years helped form the technical instincts that would guide his later strategic decisions.

Founding Nvidia at a Denny’s

In 1993, Huang co-founded Nvidia alongside engineers Chris Malachowsky and Curtis Priem.

The idea was sketched during conversations at a Denny’s restaurant in San Jose, where the founders discussed a rapidly emerging technical challenge: rendering increasingly complex 3D graphics for personal computers.

The early years were unstable.

In the mid-1990s, Nvidia nearly collapsed after a failed partnership with Sega. The company survived by pivoting quickly and releasing the RIVA 128 graphics processor in 1997, which stabilized its finances.

But Huang’s ambitions extended far beyond gaming graphics.

He believed GPUs possessed a deeper architectural advantage.

Unlike traditional CPUs designed for sequential tasks, GPUs excel at massively parallel computation — the ability to process thousands of operations simultaneously.

That design would eventually prove ideal for machine learning, and for the AI infrastructure platforms built on top of it, such as those explored in The Invisible Infrastructure Layer Reshaping Enterprise AI: Inside Glean’s Context Platform Bet.

The Strategic Bet on Accelerated Computing

The most important strategic decision in Nvidia’s history came in 2006, when the company introduced CUDA, a platform allowing developers to program GPUs for general-purpose computing tasks.

At the time, the move seemed unconventional.

Graphics chips were widely viewed as specialized rendering hardware, not as a core component of mainstream computing infrastructure.

Huang saw something different.

Machine learning systems rely heavily on matrix mathematics. That work breaks down into huge numbers of independent multiply-and-add operations, which is exactly the type of workload GPUs handle efficiently.
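A few lines of code can show why matrix math suits a GPU. The example below is a minimal illustration and is not Nvidia code. Python stands in for GPU code, and the function names (`dot_cell`, `matmul_sequential`, `matmul_parallel`) are hypothetical. The point it makes is simple: every cell of a matrix product is its own dot product, so the cells can be computed in any order or all at the same time.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def dot_cell(a, b, i, j):
    """Compute one output cell C[i][j] = sum over k of A[i][k] * B[k][j].

    This reads row i of A and column j of B and never touches any other
    output cell, so every cell is an independent task.
    """
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_sequential(a, b):
    """Reference version: visit the output cells one after another."""
    rows, cols = len(a), len(b[0])
    return [[dot_cell(a, b, i, j) for j in range(cols)] for i in range(rows)]


def matmul_parallel(a, b, workers=8):
    """Submit every output cell to a worker pool as a separate task.

    Hypothetical illustration: CPython threads do not speed up pure-Python
    arithmetic, so this shows the decomposition, not real GPU performance.
    """
    rows, cols = len(a), len(b[0])
    cells = [(i, j) for i in range(rows) for j in range(cols)]
    random.shuffle(cells)  # order is irrelevant because no cell depends on another
    c = [[0] * cols for _ in range(rows)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each task returns its own (i, j) so the result lands in the right slot.
        for (i, j), value in pool.map(lambda ij: (ij, dot_cell(a, b, *ij)), cells):
            c[i][j] = value
    return c


a = [[1, 2, 3], [4, 5, 6]]        # 2 x 3 matrix
b = [[7, 8], [9, 10], [11, 12]]   # 3 x 2 matrix
print(matmul_parallel(a, b))
```

Running it prints `[[58, 64], [139, 154]]`, which matches the sequential version even though the cells are computed in a shuffled order. No cell waits on another, and GPU hardware exploits exactly that property by running thousands of such calculations at once. A naive CUDA kernel for the same problem would typically assign one thread to each output cell.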

By opening its architecture to developers, Nvidia created an entire ecosystem built around accelerated computing.

That ecosystem would become one of Nvidia’s most powerful advantages.

Today, CUDA remains deeply embedded in the global AI development community.

The rise of AI-native developer tools and infrastructure has further reinforced this ecosystem advantage, as explored in our analysis of Cursor’s $2B ARR Explosion Signals the Arrival of Agentic Developer Infrastructure.

The Founder Who “Bet the Company”

Huang’s leadership has repeatedly involved large strategic risks.

During the 2008 financial crisis, Nvidia’s stock price fell by nearly 80 percent. Rather than retreating, Huang doubled down on long-term platform development and kept investing heavily in CUDA and general-purpose GPU computing.

That decision would later prove pivotal.

As artificial intelligence research accelerated during the 2010s, Nvidia’s GPUs became the dominant hardware platform for training neural networks.

Companies developing frontier AI models — including OpenAI, Google, Meta, and Anthropic — increasingly relied on Nvidia’s hardware architecture.

In doing so, Huang positioned Nvidia at the compute foundation of the modern AI industry.

The Founder Philosophy Behind Nvidia

Huang’s leadership philosophy blends engineering rigor with a distinctive founder mindset shaped by resilience and long-term thinking.

Across interviews, lectures, and keynote talks, several core ideas consistently emerge.

First-Principles Thinking

Huang encourages teams to reason from fundamental technical realities rather than analogies or trends. This approach has shaped many of Nvidia’s most important decisions.

Platform Strategy

Rather than building isolated products, Nvidia focuses on platforms that enable entire ecosystems of developers. CUDA exemplifies this philosophy.

Long-Term Vision

Huang frequently emphasizes that innovation emerges from “a thousand small breakthroughs.” Many of Nvidia’s most important technologies required years of patient investment before producing visible results.

Talent Environment

Huang describes his leadership goal as creating the conditions where exceptional engineers can do the most meaningful work of their careers. Nvidia’s organizational culture reflects that focus on technical excellence.

Nvidia at the Center of the AI Economy

The rapid expansion of generative AI has fundamentally altered the economics of computing.

Training frontier AI systems now requires enormous clusters of specialized processors connected through high-speed networks, pushing technology companies to invest billions of dollars into next-generation data-center infrastructure.

This emerging infrastructure layer is becoming the operational backbone of enterprise AI platforms, a transformation examined in our deep dive into The Invisible Infrastructure Layer Reshaping Enterprise AI: Inside Glean’s Context Platform Bet.

In that environment, control over the compute layer has become one of the most strategically valuable positions in the technology industry.

Training a frontier AI model today may require tens of thousands of GPUs operating simultaneously inside hyperscale data centers.

This transformation has reshaped Nvidia’s economic role in the technology sector.

The company now operates at the intersection of several enormous markets:

- AI model training

- data-center infrastructure

- robotics and simulation platforms

- high-performance computing

- autonomous systems

Some industry estimates suggest global spending on AI infrastructure could exceed $600 billion over the coming decade.

Much of that infrastructure currently runs on Nvidia hardware.

Competition Is Intensifying

Nvidia’s dominance has triggered an aggressive response from competing technology companies.

Several firms are developing alternative AI accelerators, including:

- Google’s Tensor Processing Units (TPUs)

- Amazon’s Trainium and Inferentia chips

- AMD’s Instinct MI-series accelerators

- other custom AI chips designed in-house by cloud providers

Despite this competition, Nvidia continues to benefit from an enormous ecosystem advantage.

Developers around the world have built AI software stacks optimized for CUDA and Nvidia GPUs. Rewriting those systems for alternative hardware often requires substantial engineering effort.

Those switching costs, reinforced by the network effect of a large CUDA developer base, have become one of Nvidia’s strongest competitive moats.

The Founder’s Vision of the AI Future

Huang frequently describes artificial intelligence as the beginning of a new industrial computing era.

In his view, companies and nations will soon operate “AI factories” — massive infrastructure systems designed to generate intelligence at industrial scale.

He predicts several emerging technological shifts:

- agentic AI systems capable of autonomous reasoning

- robotics powered by simulation-driven AI training

- AI systems operating across both digital and physical environments

Accelerated computing will remain central to all of them.

Editorial Takeaway

Few founders have reshaped the technical foundations of an entire industry as profoundly as Jensen Huang.

By recognizing early that GPUs could power a new class of accelerated computing systems, he positioned Nvidia beneath nearly every major AI platform now emerging across the global technology ecosystem.

As artificial intelligence moves from research labs into the infrastructure of economies and governments, the importance of that computational foundation will only grow.

Huang did not simply build a semiconductor company.

He helped build the infrastructure layer of the AI era itself.

Research Context

This article synthesizes information from company disclosures, semiconductor industry research, Nvidia keynote presentations, long-form interviews such as those on the Acquired podcast, Stanford GSB lectures, and technology reporting published between 2023 and March 2026.

Editorial Note

TechFront360 publishes independent analysis on founders, startups, and infrastructure shaping the global artificial intelligence and deep technology ecosystem.