Inside the shift from model experimentation to operational AI systems

AI adoption has reached a phase where reliability matters more than novelty.

The announcement that Portkey raised $15 million in a Series A led by Elevation Capital, with participation from Lightspeed, is not simply another LLMOps funding event. It signals that the industry is reorganizing around a new layer: the AI control plane, infrastructure designed to manage reliability, governance, and cost across production AI systems.

For several years, frontier AI progress was measured by model capability. Increasingly, it is measured by operational stability, governance, and cost visibility.

Portkey sits directly inside that transition.

From Model Performance to Operational Reliability

Early enterprise AI deployments focused on experimentation: prompt quality, latency optimization, and vendor selection.

That phase is ending.

As AI systems move into customer support, underwriting, developer workflows, and agentic automation, the primary risks shift toward infrastructure:

- Silent API failures

- Provider volatility and rate limits

- Unpredictable token spend

- Governance gaps around agent actions

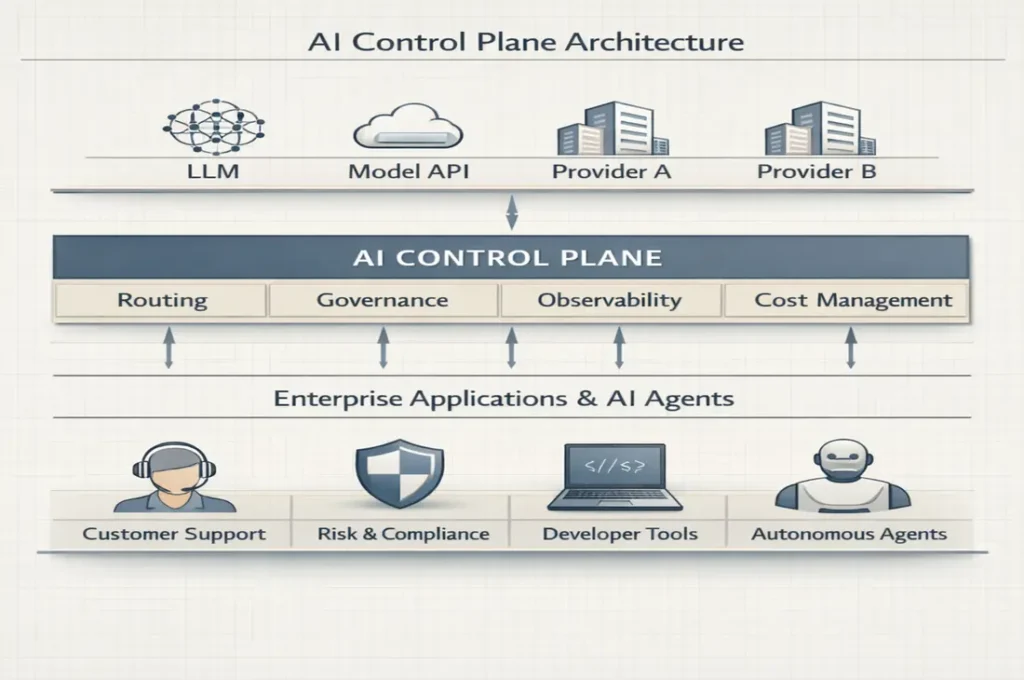

This is precisely the layer Portkey targets. Its unified control plane functions as an in-path AI gateway that routes requests, enforces policy, tracks spend, and provides observability across models.
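To make "in-path" concrete, here is a minimal sketch of a gateway that sits between callers and model providers and does the four jobs named above: enforce policy, route, track spend, and log for observability. It is an illustration under stated assumptions, not Portkey's API. `Gateway`, `GatewayPolicy`, the provider adapter signature, and the per-1,000-token prices are all hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical provider adapter: takes a prompt, returns (completion_text, tokens_used).
Provider = Callable[[str], Tuple[str, int]]


@dataclass
class GatewayPolicy:
    allowed_models: Set[str]  # models this caller may use (illustrative policy)


@dataclass
class Gateway:
    providers: Dict[str, Provider]         # model name -> provider adapter
    price_per_1k_tokens: Dict[str, float]  # illustrative USD price per 1,000 tokens
    policy: GatewayPolicy
    spend_usd: float = 0.0
    log: List[dict] = field(default_factory=list)

    def complete(self, model: str, prompt: str) -> str:
        # 1. Enforce policy before the request leaves the gateway.
        if model not in self.policy.allowed_models:
            raise PermissionError(f"model {model!r} is not allowed by policy")
        # 2. Route the request to the adapter registered for this model.
        provider = self.providers[model]
        start = time.perf_counter()
        text, tokens = provider(prompt)
        latency_ms = (time.perf_counter() - start) * 1000
        # 3. Attribute spend to this request and accumulate the running total.
        cost = tokens / 1000 * self.price_per_1k_tokens[model]
        self.spend_usd += cost
        # 4. Record an observability entry for every call.
        self.log.append({"model": model, "tokens": tokens,
                         "cost_usd": cost, "latency_ms": latency_ms})
        return text


# Usage with a stand-in provider that reports a fixed 500 tokens per call.
def fake_model(prompt: str) -> Tuple[str, int]:
    return prompt.upper(), 500


gw = Gateway(providers={"model-a": fake_model},
             price_per_1k_tokens={"model-a": 0.002},
             policy=GatewayPolicy(allowed_models={"model-a"}))
print(gw.complete("model-a", "hello"))  # prints "HELLO"
print(f"{gw.spend_usd:.4f}")            # prints "0.0010"
```

The point of the design is placement: because every request passes through `complete`, policy, cost attribution, and logging happen uniformly regardless of which provider ends up serving the call.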

In infrastructure terms, the company is positioning itself as an orchestration and governance layer for multi-model environments.

Unlike model providers competing on capability, control plane platforms compete on reliability, policy enforcement, and operational visibility.

The Scale Signals Matter More Than the Funding

The funding amount is modest by frontier AI standards. The operating metrics are not.

Across public disclosures and partner reporting, Portkey claims:

- 500B+ tokens processed daily

- ~120–125M requests per day

- ~$500K daily AI spend managed

- ~$180M annualized spend under governance

- 24,000+ organizations using the platform

- Support for 1,600+ models and 60+ providers
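Taking the disclosed figures at face value, a few lines of arithmetic show that they are internally consistent and what they imply on average:

```latex
\begin{aligned}
\text{annualized spend} &\approx \$500{,}000/\text{day} \times 365 = \$182.5\text{M/yr} \\
\text{tokens per request} &\approx \frac{500 \times 10^{9}}{125 \times 10^{6}} \text{ to } \frac{500 \times 10^{9}}{120 \times 10^{6}} \approx 4{,}000 \text{ to } 4{,}170 \\
\text{spend per request} &\approx \frac{\$500{,}000}{125 \times 10^{6}} \text{ to } \frac{\$500{,}000}{120 \times 10^{6}} \approx \$0.0040 \text{ to } \$0.0042 \\
\text{blended cost per token} &\approx \frac{\$500{,}000}{500 \times 10^{9}} = \$1 \text{ per million tokens}
\end{aligned}
```

The first line matches the ~$180M annualized figure. The other three are derived averages, not disclosed metrics. Because token volume is stated as a floor (500B+), the tokens-per-request figure is a lower bound and the blended per-token cost is an upper bound.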

Throughput at this scale places Portkey in the emerging AI infrastructure stack rather than the application layer, a transition that mirrors how vertical AI platforms such as Harvey began receiving infrastructure-style valuation premiums.

These numbers indicate something structurally important: AI governance is becoming a high-throughput infrastructure problem rather than a developer tooling feature.

Control is scaling alongside intelligence. The category remains early, with no dominant standard yet, which allows control plane platforms to shape how enterprise AI deployment frameworks are defined.

The business model reflects this shift. Control plane platforms typically monetize through usage-based infrastructure pricing, enterprise contracts, and governance tooling layers that sit directly inside production workflows, creating recurring revenue tied to operational dependency rather than experimentation.

Why the “Control Plane” Narrative Is Emerging

The control plane concept is borrowed from cloud infrastructure, where orchestration layers coordinate distributed systems.

AI now requires a similar abstraction.

Model ecosystems are volatile. Pricing changes without warning. Models deprecate. Agent frameworks evolve faster than enterprise procurement cycles.

Enterprises need a buffer layer that absorbs that volatility.
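One common way a buffer layer absorbs that volatility is ordered fallback: when the preferred provider fails, the request moves to the next one instead of surfacing an error to the application. The sketch below assumes a hypothetical `ProviderError` raised by adapters on outages, rate limits, or deprecated models. It is a generic illustration of the fallback pattern, not a description of how Portkey implements routing.

```python
from typing import Callable, List, Tuple


class ProviderError(Exception):
    """Hypothetical error an adapter raises on an outage, rate limit, or deprecated model."""


def complete_with_fallback(
    prompt: str, targets: List[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try each (name, adapter) in priority order; return (name_used, completion)."""
    errors = []
    for name, call in targets:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next provider.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Usage: the primary is rate limited, so the secondary serves the request.
def primary(prompt: str) -> str:
    raise ProviderError("429 rate limited")


def secondary(prompt: str) -> str:
    return f"answer to {prompt!r}"


used, text = complete_with_fallback(
    "refund policy?", [("primary", primary), ("secondary", secondary)]
)
print(used)  # prints "secondary"
```

Only `ProviderError` triggers fallback here; any other exception propagates, so bugs in the caller are not silently masked by retries against other providers.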

Portkey’s positioning reflects a broader industry pattern visible across platforms building traffic routing, observability, evaluation, and budget governance. A small cohort of infrastructure startups is converging on this layer, reinforcing the emergence of governance as a standalone AI category rather than a feature. Investors showed a similar pattern during the recent repricing of frontier AI infrastructure companies.

This is the infrastructure phase of AI.

Agentic AI Accelerates the Need for Governance

The next trigger is agent autonomy.

When AI agents gain permission to access systems, spend money, and take actions, infrastructure requirements expand from monitoring to enforcement.

Portkey’s roadmap, which covers identity boundaries, permission systems, budget guardrails, and day-zero support for model changes, mirrors the shift from passive analytics toward active control.

Consider a customer support agent issuing refunds across thousands of transactions overnight. Without a control plane, the failure is discovered after the loss. With one, policy prevents the action before it propagates. The difference is not intelligence. It is operational containment.

That shift reframes LLMOps as policy infrastructure. The companies that own this layer shape how enterprises safely deploy autonomous systems.
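The refund scenario above can be sketched as a guardrail that runs in-path, before the side effect, rather than as a report read the next morning. In this minimal sketch, `RefundGuardrail`, `run_agent_refunds`, and both limits (a per-refund cap and a daily budget) are hypothetical; a production control plane would also need identity, audit logging, and durable state, which are omitted here.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class RefundGuardrail:
    max_per_refund: float  # hypothetical per-action limit in USD
    daily_budget: float    # hypothetical cumulative limit in USD
    spent_today: float = 0.0

    def authorize(self, amount: float) -> bool:
        """Approve and reserve budget for a refund, or reject it before it executes."""
        if amount <= 0 or amount > self.max_per_refund:
            return False
        if self.spent_today + amount > self.daily_budget:
            return False
        self.spent_today += amount
        return True


def run_agent_refunds(
    requests: List[Tuple[str, float]],
    guardrail: RefundGuardrail,
    issue_refund: Callable[[str, float], None],
) -> Tuple[List[str], List[str]]:
    issued, blocked = [], []
    for order_id, amount in requests:
        # The policy check sits in-path: the refund only executes if it passes.
        if guardrail.authorize(amount):
            issue_refund(order_id, amount)
            issued.append(order_id)
        else:
            blocked.append(order_id)
    return issued, blocked


# Usage: C exceeds the per-refund cap; E would push the day past the budget.
ledger = []
guard = RefundGuardrail(max_per_refund=100, daily_budget=250)
issued, blocked = run_agent_refunds(
    [("A", 80), ("B", 90), ("C", 150), ("D", 70), ("E", 20)],
    guard,
    lambda order_id, amount: ledger.append((order_id, amount)),
)
print(issued, blocked)  # prints ['A', 'B', 'D'] ['C', 'E']
```

The agent's intelligence is untouched; what changes is that the blast radius of a bad decision is capped at the budget, which is the "operational containment" the scenario describes.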

A Subtle Geographic Signal

Portkey reflects a pattern emerging across global AI infrastructure: operational layers are diversifying geographically, and that fragmentation is reshaping infrastructure strategy across continents.

Frontier models remain concentrated in the United States. Sovereign infrastructure is expanding in Europe. Application experimentation is global. Operational tooling is increasingly distributed.

Control planes sit in a unique position because they are vendor-agnostic. That neutrality allows them to become ecosystem anchors.

Infrastructure neutrality historically compounds into strategic leverage.

What Investors Are Actually Pricing

Series A funding for an LLMOps platform suggests investors are underwriting duration rather than velocity.

The logic is straightforward:

If enterprises standardize governance early, switching costs increase.

If switching costs increase, infrastructure vendors persist.

If vendors persist, revenue duration becomes predictable.

This is infrastructure math, not SaaS growth math.

Elevation’s involvement aligns with a longer-horizon thesis: platforms that sit inside operational workflows often mature into category standards.

The thesis carries risk, because governance layers can be bypassed if model providers vertically integrate similar controls.

The deeper contrarian possibility is that control planes may matter more than models over time. Model capability is becoming abundant, but operational trust remains scarce. If enterprises standardize around governance layers first, those layers begin to shape which models are usable, how agents behave, and where budgets flow.

In that scenario, infrastructure vendors quietly become gatekeepers of AI deployment economics, influencing demand without owning the intelligence itself. The history of cloud suggests this outcome is plausible.

The Real Competitive Layer Is Becoming Invisible

The most important infrastructure rarely competes on visibility.

Users do not notice orchestration layers when they work. They only notice when they fail. Like cloud load balancers and identity layers, the most critical AI infrastructure may sit between systems rather than inside them. That positioning makes the control plane less visible than models but potentially more durable. The same invisible-infrastructure dynamic is shaping frontier model competition itself, particularly in enterprise deployment layers.

Portkey’s core narrative, “AI that never breaks,” reflects an emerging enterprise expectation: intelligence is assumed; reliability is differentiating.

This mirrors earlier cloud transitions where uptime, governance, and cost visibility became the primary decision criteria.

Strategic Implications for the AI Stack

Portkey’s raise reinforces several structural shifts:

- Multi-model environments are becoming default

- Governance is moving into runtime infrastructure

- Finance teams are entering AI procurement decisions

- Agent deployment requires permission frameworks

- Vendor neutrality is becoming a defensibility strategy

These shifts help explain why many frontier startups begin behaving like infrastructure companies earlier than in previous cycles.

Collectively, these trends indicate that AI infrastructure is thickening.

The stack is no longer model → application.

It is model → orchestration → governance → application → agent execution.

Control planes sit at the center.

The Larger Signal: AI Has Become a Managed System

The most important insight behind the funding is conceptual.

AI is no longer a capability organizations experiment with. It is a system they must operate.

Systems in operation require reliability layers.

Reliability layers require standards.

Standards create infrastructure leaders.

Portkey is positioning itself inside that sequence.

Whether it becomes a category anchor remains uncertain. What is clearer is the direction of the market: AI’s next phase is operational, not experimental.

The AI stack is quietly reorganizing around control. The companies that decide how intelligence is allowed to behave may ultimately matter more than the ones that create it. The next wave of AI consolidation may occur around governance standards rather than model breakthroughs.

Research Context: Based on company disclosures, investor statements, industry reporting, and infrastructure positioning trends across enterprise AI deployment.

Editorial Note: This analysis evaluates structural implications of LLMOps funding rather than reporting funding events.