SINGAPORE — March 18, 2026

Video Rebirth, a Singapore-based AI infrastructure startup operating in the emerging category of industrial video generation systems, has raised $80 million to build a physics-native AI engine that operates at the simulation layer of creative production.

A new layer of artificial intelligence is emerging inside video generation — one that shifts the problem from visual realism to physical coherence.

This is not an upgrade to video generation — it is a redefinition of what video actually is.

In this framing, AI video is built not as media but as infrastructure.

The round includes backing from AMD Ventures, Hyundai Motor Group (via ZER01NE), Feedback Ventures, and CJ Group — alongside strategic investors spanning entertainment, mobility, and enterprise AI.

But the capital is not the story.

The story is what Video Rebirth is trying to replace.

What is being replaced is not a toolchain but an entire production paradigm built on approximation rather than simulation.

From Generative Video to Physical Systems

Most AI video platforms today optimize for:

- realism

- cinematic quality

- short-form generation

But they break under a deeper constraint: physical inconsistency.

Objects drift. Lighting shifts unnaturally. Motion lacks causal continuity.

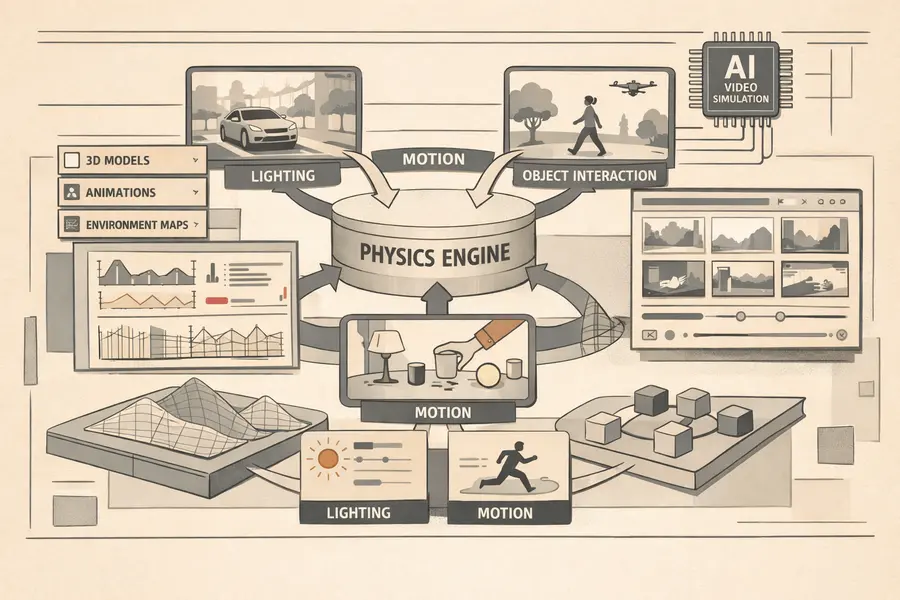

Video Rebirth is built around a different premise:

Video is not just pixels — it is a simulation of the physical world.

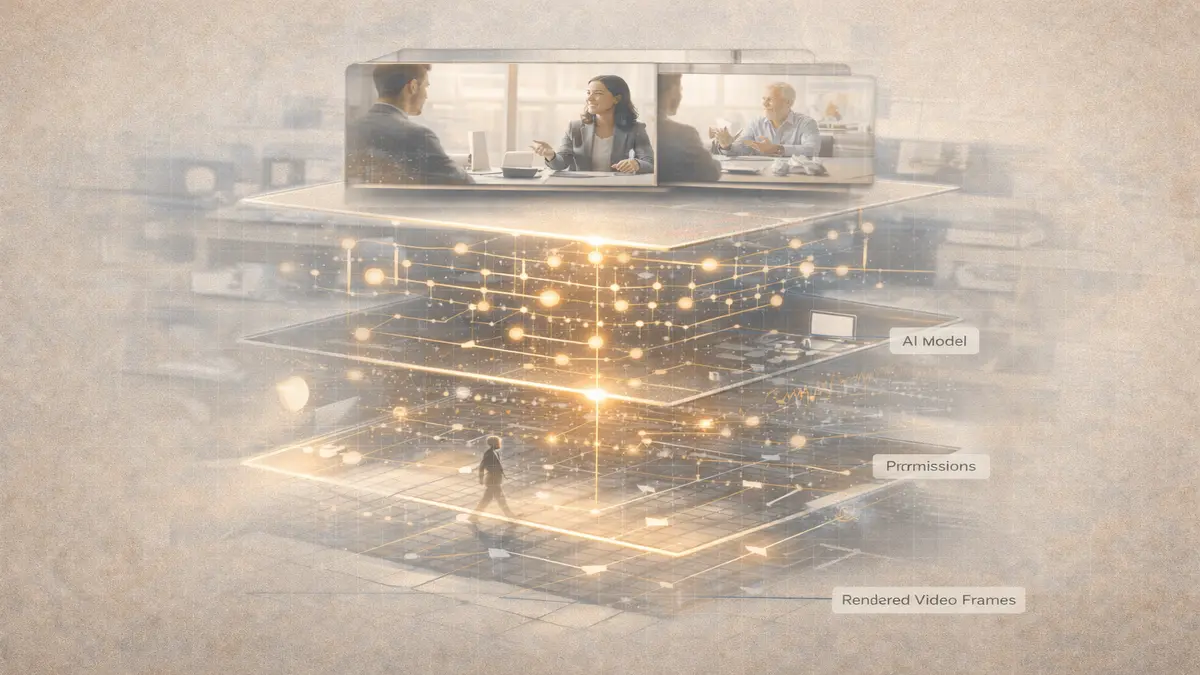

Its core product, the BACH engine, is designed not as a creative tool but as an infrastructure layer for generating coherent, controllable, real-world video environments.

This reflects a broader shift already visible across AI systems.

Generation is giving way to structured orchestration, as explored in “The New AI Layer Reshaping Creative Work.”

Why This Moment Matters

The timing of Video Rebirth’s raise reflects a broader inflection point in AI video.

Early-generation models solved for realism.

Second-generation systems improved control.

But neither solved for physical consistency at scale.

That gap is now becoming economically significant, particularly in industries where simulation, not storytelling, defines value.

This mirrors a broader shift across AI infrastructure, where capability is no longer the bottleneck and coordination and system design are, as explored in “The Next AI Breakthrough Is Expertise, Not Models.”

The Core Stack: Physics as the Differentiator

At the center of Video Rebirth’s architecture are three core innovations:

1. Physics Native Attention (PNA)

A system designed to model:

- lighting behavior

- shadows

- object interactions

- spatial consistency

Instead of approximating reality, it attempts to simulate it.

2. Dual DiT (Diffusion Transformer)

Enables:

- precise prompt control

- shot consistency across sequences

- stable scene transitions

This addresses a fundamental limitation in current models: the lack of continuity between frames.

3. Multi-Step Sampling Loss (MSSL)

A proprietary approach that enables:

- native 30fps generation

- fluid motion without post-processing

- production-ready output

This is a critical unlock, because most existing systems still rely on:

→ editing

→ interpolation

→ rendering pipelines

Video Rebirth is attempting to eliminate that layer entirely.
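For readers unfamiliar with the interpolation step named above, here is a minimal Python sketch of what naive frame interpolation does. It is purely illustrative: the `interpolate_double` function and the toy list-of-floats "frames" are assumptions made for this example, and it does not represent Video Rebirth's method or any specific vendor's pipeline.

```python
from typing import List

Frame = List[float]  # toy "frame": a flat row of pixel intensities (hypothetical)


def interpolate_double(frames: List[Frame]) -> List[Frame]:
    """Roughly double the frame rate by inserting a linear blend between each pair of frames."""
    if not frames:
        return []
    out: List[Frame] = []
    for prev, nxt in zip(frames, frames[1:]):
        out.append(prev)
        # The in-between frame is a per-pixel average; it has no notion of motion or physics.
        out.append([(a + b) / 2 for a, b in zip(prev, nxt)])
    out.append(frames[-1])
    return out


# A bright pixel moving one position per frame across a 4-pixel row.
clip = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
doubled = interpolate_double(clip)
print(len(doubled))  # 5
print(doubled[1])    # [0.5, 0.5, 0.0, 0.0]
```

The blended frame shows two half-bright pixels rather than one pixel in an intermediate position. That ghosting is a simple example of the kind of artifact a post-processing step can introduce, and it helps explain why generating every frame natively is presented as an advantage.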

The Strategic Positioning: Industrial, Not Creative

Unlike platforms such as OpenAI’s Sora or Runway, which focus on cinematic storytelling and creative tooling, Video Rebirth is positioning itself as:

infrastructure for production and simulation

Its target markets include:

- advertising production

- film and animation pipelines

- e-commerce content generation

- interactive gaming environments

- physical AI training simulations

This last category is not adjacent — it is central.

Why Hyundai Invested

Hyundai Motor Group’s involvement signals a deeper use case:

training physical AI systems inside simulated environments

If Video Rebirth can generate:

- physically consistent environments

- real-world motion dynamics

- causal interactions

then its platform becomes:

→ not just a content tool

→ but a training layer for robotics and autonomous systems

This aligns with a broader infrastructure shift where simulation becomes foundational to AI development, a pattern increasingly visible across capital flows into AI systems, as analyzed in “The $3B AI Funding Wave Reshaping Infrastructure, Agents, and Robotics.”

The Founder Thesis

The company is led by Wei Liu, former Tencent Distinguished Scientist and IEEE/AAAS Fellow — a profile that places the company closer to deep infrastructure builders than traditional creative AI startups.

Alongside co-founders from Tencent AI and G42, the team is building around a clear thesis:

The future of video is not rendering — it is real-time world generation.

They describe this transition as:

“de-engineering” content creation

Meaning:

- no cameras

- no rendering pipelines

- no physical production constraints

Just:

→ fully controllable, interactive digital environments

The Competitive Landscape: A Misaligned Market

The AI video market is neither saturated nor crowded. It is fragmented, both structurally and in direction.

Each major player is optimizing for a different layer of the stack:

- Runway → creative tooling

- OpenAI (Sora) → narrative realism

- Luma AI → motion fidelity

- Kuaishou (Kling) → scalable content

But none are fully optimized for:

physics-consistent, production-ready simulation

Video Rebirth is targeting that gap.

From Content to Simulation Infrastructure

What Video Rebirth is building is not just another AI video model.

It is part of a deeper transition:

From:

→ generating content

To:

→ generating environments

This mirrors shifts already visible across AI:

- copilots → agents

- tools → systems

- outputs → workflows

And now:

- video → world models

Early Signals and Strategic Momentum

The structure of the funding round is itself strategic:

- AMD → compute infrastructure

- Hyundai → physical AI + simulation

- CJ Group → entertainment + IP ecosystems

This creates a rare alignment:

compute + simulation + distribution

If executed correctly, Video Rebirth is not just building a tool.

It is building a platform layer across multiple industries.

The Strategic Control Point

The company’s long-term positioning can be reduced to a single insight:

The future of video will not be defined by:

- resolution

- realism

- generation speed

It will be defined by:

how accurately systems can simulate reality

Because that determines:

- usability

- scalability

- economic value

The Opportunity Ahead

Video Rebirth remains early:

- $80M total funding

- globally distributed team with Tencent/G42 pedigree

- early-stage product exposure

- no large-scale public benchmarks yet

But its thesis aligns with a deeper structural shift:

AI is moving from generating media to generating worlds.

If that transition holds, then:

The most valuable platforms will not be those that create content, but those that define the rules of reality inside digital environments.

Live Update Signal

This article will be updated as Video Rebirth releases product benchmarks, enterprise pilots, or new partnerships.

Research Context

This report synthesizes primary-source data from PR Newswire, company disclosures, and official product materials released March 18, 2026.